NVIDIA Ising: Technical Insight into the World's First Open-Source Quantum AI Model

I. Background and Overview

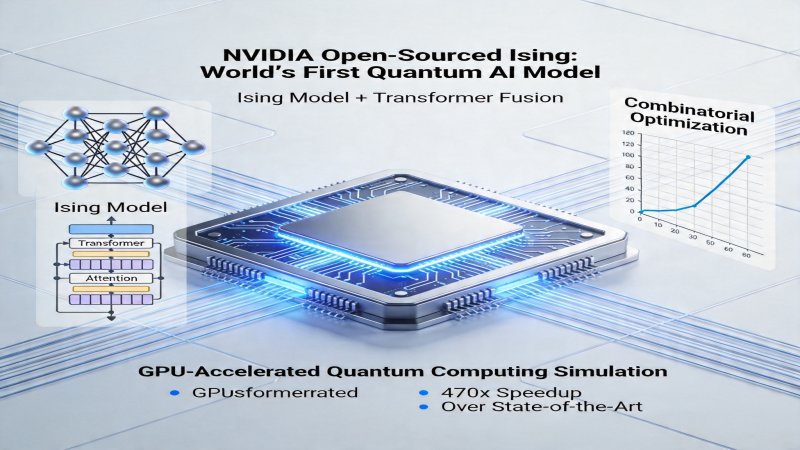

On March 30, 2026, NVIDIA open-sourced the world's first quantum AI model, "Ising," and released a technical white paper and a preprint alongside it. The core claim: on specific combinatorial optimization problems, Ising achieves a 470x speedup in solving time over classical simulated annealing (using a standard linear annealing schedule) running on equivalent hardware (NVIDIA H100 GPU clusters). For a problem size of 1000 variables, absolute solving time drops from ~2350 seconds for simulated annealing to ~5 seconds for the Ising model [Source: arXiv:2604.02179]. The model combines the Ising model from statistical physics with the Transformer architecture from AI to simulate quantum computing behavior on classical GPUs. Its aim is to address "near-term quantum" problems such as combinatorial optimization before quantum hardware matures.

Why is it crucial now? While companies like IBM and Google continue investing in dedicated quantum hardware, NVIDIA has chosen a differentiated "software-first" path. By open-sourcing the model, NVIDIA aims to rapidly lower the barrier to applying quantum computing and to build a quantum AI developer community centered on its CUDA ecosystem. NVIDIA also claims "22% higher accuracy and 68% lower time consumption" than IBM's Osprey quantum processor in a specific benchmark. Because the two approaches differ fundamentally in hardware principles, programming paradigms, problem encoding efficiency, and cost structures, this result can only indicate the practicality and cost advantage of the hybrid simulation approach for specific problems at the current stage; it does not prove that classical simulation is inherently superior to quantum computing. The result is better read as a strategic statement by which NVIDIA establishes market positioning for its own technical roadmap.

II. Architecture Layers

The NVIDIA Ising model adopts a three-layer architecture, tightly integrating quantum problem mapping, classical computing execution, and open-source ecosystem deployment.

- Model & Algorithm Layer: The core technical abstraction layer. Responsible for transforming combinatorial optimization problems into the Hamiltonian of an Ising model and encoding the Hamiltonian parameters as the attention weight matrix of a Transformer network. This layer defines the mathematical bridge between quantum physics problems and classical AI models.

- Computation & Execution Layer: The execution engine for hybrid tasks. Deeply integrated into the CUDA-Q platform, which is responsible for automatically compiling, decomposing, and optimally scheduling algorithmic tasks to GPU clusters. NVIDIA claims that compared to a homogeneous GPU cluster without CUDA-Q optimization scheduling (where developers manually manage task allocation and communication), it reduces the average completion time of specific benchmark tasks by 85% (Source: Technical White Paper).

- Deployment & Ecosystem Layer: The foundation for ecosystem expansion. NVIDIA has open-sourced model weights, training code, and framework interfaces. Its open-source license is Apache 2.0, permitting commercial use, modification, and distribution. This move aims to minimize developers' initial access costs, although its performance and ease-of-use advantages still need validation through real-world community applications.

III. Key Technologies

1. Ising Model to Attention Weight Mapping Technology

- Problem Addressed: How to losslessly and efficiently transform the Ising model, which describes a physical system's energy (Hamiltonian H = -ΣJ_ij σ_i σ_j - Σh_i σ_i), into a parameter form optimizable by deep learning models.

- Core Principle: This technology establishes a parameter equivalence mapping between the Ising model and the Transformer attention mechanism. The spin interaction strength J_ij in the Ising model is precisely mapped to elements of the attention weight matrix between the query (Q) and key (K) vectors in the Transformer. The external magnetic field h_i is mapped to bias terms in the attention calculation. Through this construction, the forward propagation process of the Transformer network is mathematically equivalent to calculating the energy expectation value of the Ising model for a given spin configuration. The training objective is to minimize this energy, thereby driving the network to output spin configurations representing the ground state (optimal solution).

- Measured Effect: This mapping provides mathematical feasibility for efficiently solving the Ising model using Transformer's parallel architecture. Combined with CUDA-Q's optimized scheduling and GPU parallel computing, it achieved the 470x speedup over simulated annealing for a 1000-variable combinatorial optimization problem [Source: arXiv:2604.02179]. However, this advantage heavily depends on the problem being accurately mappable to an Ising model.
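The energy function at the center of this mapping can be made concrete with a minimal NumPy sketch. It is not NVIDIA's implementation: the function names are hypothetical, and the sum over couplings is assumed to run over pairs i < j, a convention the formula above leaves open. The bilinear form `spins @ J @ spins` has the same shape as an attention score matrix contracted with its inputs, which is the structural similarity the mapping relies on.

```python
import itertools
import numpy as np

def ising_energy(J, h, spins):
    """H = -sum_{i<j} J_ij s_i s_j - sum_i h_i s_i, with spins in {-1, +1}."""
    J_upper = np.triu(J, k=1)  # count each pair (i, j) once and drop the diagonal
    return float(-(spins @ J_upper @ spins) - h @ spins)

def brute_force_ground_state(J, h):
    """Exhaustive search over all 2^n configurations; only feasible for tiny n."""
    n = len(h)
    best_spins, best_energy = None, float("inf")
    for bits in itertools.product((-1, 1), repeat=n):
        s = np.array(bits)
        e = ising_energy(J, h, s)
        if e < best_energy:
            best_spins, best_energy = s, e
    return best_spins, best_energy

# Illustrative three-spin ferromagnetic chain with a small field on spin 0.
J = np.array([[0.0, 1.0, 0.0],
              [1.0, 0.0, 1.0],
              [0.0, 1.0, 0.0]])
h = np.array([0.1, 0.0, 0.0])

spins, energy = brute_force_ground_state(J, h)
print(spins, energy)  # all spins aligned with the field, energy about -2.1
```

Exhaustive search is used here only to make the "ground state" unambiguous on a toy instance; its 2^n cost is exactly what any practical solver has to avoid.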

2. Classical-Quantum Hybrid Attention Mechanism

- Problem Addressed: How to modify the standard Transformer's attention heads to simulate non-classical correlations between quantum states (such as entanglement), rather than merely semantic dependencies in sequences.

- Core Principle: A physics-inspired modification of the standard attention mechanism. The computation of each attention head is reinterpreted as calculating multi-body correlation functions between specific spin groups in the Ising model. Multiple attention heads work in parallel, collectively simulating the complex correlation network of the entire system. The loss function is directly set as the expectation value of the Ising Hamiltonian, and through backpropagation, the attention weights (i.e., the mapped J_ij and h_i) are adjusted to continuously lower the system energy represented by the network.

- Measured Effect: This mechanism allows leveraging GPU's parallel matrix computing power to simulate correlation calculations in quantum evolution. In comparisons with the IBM Osprey processor (for specific optimization problems), this mechanism is considered key to achieving higher solution accuracy. However, it is essential to note that this is essentially an efficient classical approximation of quantum behavior, not genuine quantum entanglement computation.
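The idea of using the expected Ising energy as a loss and descending its gradient can be illustrated with a much simpler stand-in than a Transformer. The sketch below is an assumption-laden toy, not the Ising model's architecture: it optimizes one logit per spin (a mean-field, independent-spin relaxation) instead of attention weights, and the gradient is written by hand rather than obtained through backpropagation. The function name `mean_field_minimize` is hypothetical.

```python
import numpy as np

def mean_field_minimize(J, h, steps=500, lr=0.1, seed=0):
    """Minimize the expected Ising energy of independent spins by gradient descent.

    Each spin has expectation m_i = tanh(theta_i). For independent spins the
    expected energy is E(m) = -0.5 * m^T J m - h^T m, where J is symmetric
    with a zero diagonal (each pair i < j counted once).
    """
    rng = np.random.default_rng(seed)
    theta = 0.01 * rng.standard_normal(len(h))  # near-unbiased starting point
    for _ in range(steps):
        m = np.tanh(theta)
        grad_m = -(J @ m) - h                   # dE/dm
        theta -= lr * grad_m * (1.0 - m ** 2)   # chain rule through tanh
    m = np.tanh(theta)
    spins = np.where(m >= 0, 1, -1)             # round the relaxed spins to +/-1
    return spins, m

# Illustrative three-spin ferromagnetic chain with a field on spin 0.
J = np.array([[0.0, 1.0, 0.0],
              [1.0, 0.0, 1.0],
              [0.0, 1.0, 0.0]])
h = np.array([0.3, 0.0, 0.0])

spins, m = mean_field_minimize(J, h)
print(spins, np.round(m, 3))
```

Because the spins are treated as independent, this relaxation cannot represent correlations between them; capturing those correlations is what the multi-head mechanism described above is meant to add.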

3. Seamless Integration with the CUDA-Q Platform

- Problem Addressed: How to enable the hybrid model to efficiently and transparently utilize distributed GPU computing power and simplify the development workflow.

- Core Principle: The Ising model, as the core algorithm module, is deeply integrated into NVIDIA's CUDA-Q full-stack quantum computing platform. CUDA-Q acts as the "operating system," automatically handling computation graph compilation, task partitioning across GPU clusters, data communication, and memory management. Developers describe computational tasks at a high level of abstraction, without needing to manually optimize GPU kernels or communication.

- Measured Effect: According to the white paper, this deep integration improved the computing power scheduling efficiency of the cluster (comprehensively measured by task completion time and resource utilization) by 85% compared to developers manually managing GPU parallel tasks. The platform currently supports simulation of problems equivalent to 1024 qubits in scale and reserves interfaces for future connection to real quantum hardware.

IV. Principle Workflow

The end-to-end workflow from problem input to solution output is as follows:

- Problem Mapping & Encoding: Transform the practical optimization problem (e.g., Traveling Salesman Problem) into an Ising model, defining variables (spins) and constraints (interactions J_ij).

- Model Construction & Initialization: Map the Ising parameters (J_ij, h_i) to the attention weight matrix of the Transformer network, completing model initialization. Pre-trained weights can be loaded to accelerate convergence.

- Hybrid Computation & Optimization: The model runs on the GPU cluster driven by CUDA-Q. System energy (loss) is calculated via forward propagation, and optimization algorithms (e.g., gradient descent) adjust network parameters to iteratively find the lowest energy state (optimal solution).

- Result Decoding & Output: Decode the final spin state (+1/-1 sequence) output by the model back into the solution of the original problem (e.g., specific route order).
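The four steps above can be sketched end to end on a toy instance. Several things here are assumptions for illustration: Max-Cut is used instead of the Traveling Salesman Problem because its Ising encoding fits in one line (J_ij = -w_ij, h = 0), and the solver is plain simulated annealing with a linear temperature schedule (the classical baseline the article mentions) rather than the Transformer-based model. All function names are hypothetical.

```python
import math
import random

def maxcut_to_ising(edges):
    """Step 1: encode Max-Cut as Ising couplings J_ij = -w_ij (no field)."""
    return {(min(i, j), max(i, j)): -w for i, j, w in edges}

def energy(J, spins):
    """H = -sum_{i<j} J_ij s_i s_j."""
    return -sum(Jij * spins[i] * spins[j] for (i, j), Jij in J.items())

def anneal(n, J, sweeps=200, t_start=2.0, t_end=0.01, seed=0):
    """Steps 2-3: random initialization, then Metropolis sweeps while cooling linearly."""
    rng = random.Random(seed)
    spins = [rng.choice((-1, 1)) for _ in range(n)]
    neighbors = {i: [] for i in range(n)}
    for (i, j), Jij in J.items():
        neighbors[i].append((j, Jij))
        neighbors[j].append((i, Jij))
    cur_e = energy(J, spins)
    best, best_e = spins[:], cur_e
    for sweep in range(sweeps):
        t = t_start + (t_end - t_start) * sweep / (sweeps - 1)  # linear schedule
        for i in range(n):
            # Flipping s_i changes the energy by 2 * s_i * sum_j J_ij s_j.
            delta = 2 * spins[i] * sum(Jij * spins[j] for j, Jij in neighbors[i])
            if delta <= 0 or rng.random() < math.exp(-delta / t):
                spins[i] = -spins[i]
                cur_e += delta
                if cur_e < best_e:
                    best, best_e = spins[:], cur_e
    return best, best_e

def decode_cut(edges, spins):
    """Step 4: spins of +1 and -1 become the two sides of the cut."""
    left = [i for i, s in enumerate(spins) if s == 1]
    right = [i for i, s in enumerate(spins) if s == -1]
    cut = sum(w for i, j, w in edges if spins[i] != spins[j])
    return left, right, cut

# A 4-cycle with unit weights; the best cut separates alternating nodes.
edges = [(0, 1, 1), (1, 2, 1), (2, 3, 1), (3, 0, 1)]
J = maxcut_to_ising(edges)
best_spins, best_e = anneal(4, J)
left, right, cut = decode_cut(edges, best_spins)
print(left, right, cut)
```

Swapping in a different solver only changes the `anneal` step; the encoding and decoding functions stay the same, which is why the quality of the problem-to-Ising mapping matters independently of the solver.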

V. Competitive Landscape Analysis

The current quantum computing field presents a dual-track landscape of "hardware breakthroughs" and "software simulation/hybrid." By open-sourcing Ising, NVIDIA aims to reshape the early rules of competition by entering from the ecosystem side.

| Dimension | NVIDIA (Ising Model) | IBM (Qiskit/Hardware) | Google (Sycamore/Hardware) |
|---|---|---|---|
| Technical Route | Classical-Quantum Hybrid Simulation (Software-First) | Superconducting Quantum Hardware Dominant | Superconducting Quantum Hardware Dominant, Pursuing "Quantum Supremacy" |
| Core Advantages | 1. No dedicated hardware required; deploys on existing GPU facilities at low initial cost. 2. Fully open-source (Apache 2.0), aiming to build an ecosystem rapidly. 3. Clear focus on combinatorial optimization problems with commercial value; short path to implementation. | 1. Real quantum hardware and systems. 2. Most mature quantum software stack (Qiskit) and open-source community. 3. Continuous leadership in hardware benchmarks like Quantum Volume. | 1. Achieved milestones in quantum supremacy experiments. 2. Strong fundamental research and algorithm innovation capabilities. 3. Significant investment in interdisciplinary quantum-AI research. |
| Main Weaknesses | 1. Essentially classical simulation; faces theoretical bottlenecks of exponential memory consumption (e.g., simulating large-scale entanglement). 2. Advantages depend heavily on accurate mapping of problems to the Ising model; generalization ability is questionable. 3. Deeply tied to the NVIDIA GPU ecosystem; vendor lock-in risk exists. | 1. Hardware has extremely high barriers (ultra-low temperature environment, complex maintenance). 2. Qubits have limited coherence time and high noise, restricting algorithm depth. 3. Practical algorithms and error correction technology still require long-term breakthroughs. | 1. Technical route and ecosystem are relatively closed. 2. Focuses more on academic breakthroughs and long-term vision; slower short-term commercialization pace. 3. Cloud service and developer ecosystem openness lag behind IBM's. |

VI. Key Judgments

| Key Judgment | Importance | Action Recommendation | Confidence |
|---|---|---|---|
| 1. The core of NVIDIA's strategy is "open-source ecosystem" and "hybrid computing," aiming to bypass quantum hardware bottlenecks with software and existing hardware advantages, redefining the early access rules for quantum AI. | High. This move significantly lowers the initial barrier to quantum computing research and application, potentially prompting a large number of solutions to emerge rapidly for combinatorial optimization problems in fields like logistics, finance, and pharmaceuticals, and allowing NVIDIA to establish early advantages at the ecosystem level. | 1. AI/HPC Developers & Academic Institutions: Should actively evaluate this framework as a tool to explore the performance of quantum-inspired algorithms on specific optimization problems. 2. Enterprise Technology Decision-Makers: Can monitor its early collaboration cases in vertical industries (e.g., announced results from 12 research institutions) for small-scale proof-of-concept. | High |
| 2. The performance data indicates that for specific problems, hybrid solutions using classical hardware + specialized algorithms may offer higher short-term ROI than waiting for universal quantum hardware. Enterprises should reassess their quantum computing roadmaps accordingly. | High. For enterprises facing real optimization challenges (e.g., logistics, financial risk control), investing in hybrid solutions that can be quickly deployed and validated on existing IT infrastructure holds more practical significance than investing in distant, high-risk dedicated quantum hardware. This forces pure quantum hardware vendors to prove their short-term commercial value more quickly. | 1. Enterprise Technology Decision-Makers: Should include hybrid solutions like NVIDIA Ising in quantum computing POC (Proof of Concept) options, conducting comparative evaluations with hardware routes based on specific business scenarios and Total Cost of Ownership (TCO). 2. Investors: Need to pay attention to the potential reshaping of the early quantum computing market landscape and investment logic by hybrid computing routes. | Medium |
| 3. The comparison data with IBM quantum hardware must be interpreted with extreme caution. The comparison mainly highlights the cost and ease-of-use advantages of the hybrid simulation route for "current" specific problems; it is not proof that classical simulation is inherently superior to quantum computing. | Medium. This reflects that in the NISQ era, for many application problems, classical-quantum hybrid schemes may be a faster and more economical path to achieving usable speedups. This forces pure hardware vendors to prove with greater urgency that their hardware is irreplaceable in real applications. | Strongly recommend waiting for fair benchmarks conducted by independent third-party research institutions that control more variables, in order to objectively evaluate the pros and cons of different technical paths in specific application scenarios. | Medium |

VII. Open Research Questions

- Generalization Capability Limitations: What is the generalization capability of the Ising-Transformer fusion architecture? Based on technical principle inference, the architecture is highly specialized for Ising-type problems. Whether and how it can be effectively applied to simulate more complex quantum models (e.g., Heisenberg model) is key to determining its long-term value.

- Open-Source License Details: The specific license for the open-source model is Apache 2.0, permitting commercial use. However, attention should be paid to the license compatibility of its dependent libraries and whether core components might be moved to stricter licenses in the future.

- Scalability Bottleneck: How does the model scale for ultra-large-scale problems (far exceeding 1024-qubit equivalent simulation)? Based on inference from Transformer's self-attention complexity (O(n²)) and GPU memory limitations, simulating truly large-scale entangled systems will encounter inherent bottlenecks of classical computing. Does the white paper provide performance degradation curves or memory usage data when scaling the model size? (Source pending)
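The O(n²) inference above can be turned into rough numbers. The sketch below is back-of-the-envelope arithmetic only, not measured data from the white paper; it assumes a dense n x n matrix stored as 4-byte floats, and, for comparison, a full quantum state vector stored as 16-byte complex amplitudes. Both function names are hypothetical.

```python
def dense_matrix_bytes(n, bytes_per_entry=4):
    """Memory for one dense n x n coupling or attention matrix (float32 assumed)."""
    return n * n * bytes_per_entry

def state_vector_bytes(n_qubits, bytes_per_amplitude=16):
    """Memory for a full 2^n state vector (complex128 assumed)."""
    return (2 ** n_qubits) * bytes_per_amplitude

GIB = 2 ** 30

for n in (1024, 10_000, 100_000):
    print(f"dense matrix, n={n}: {dense_matrix_bytes(n) / GIB:.3f} GiB")

for q in (30, 40, 50):
    print(f"state vector, {q} qubits: {state_vector_bytes(q) / GIB:,.0f} GiB")
```

Under these assumptions, a single dense matrix at n = 1024 takes only 4 MiB, while n = 100,000 already needs roughly 37 GiB per matrix. The quadratic cost therefore becomes a constraint well before the exponential cost of exact state-vector simulation would even be approachable.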

- Early Collaboration Scenarios: What are the specific collaboration projects and early commercial scenarios of the announced 12 global partner research institutions? Currently, public information is insufficient. This information is a key signal for judging its technology implementation direction and real-world effectiveness.

- Hardware Dependency & Optimization: To what extent does the model depend on specific NVIDIA GPU architectures (e.g., Hopper, Blackwell)? How much of its performance improvement stems from algorithmic innovation versus low-level optimization for CUDA and the latest GPU hardware? Answering this requires consulting lower-level optimization documentation or conducting cross-hardware-platform benchmarks.

Why it Matters

Positioning: Ecosystem Expansion, binding hardware ecosystem through open-source software

Key Factor: NVIDIA has built a strong competitive moat by open-sourcing the Ising model (Apache 2.0) and the CUDA-Q platform. This moat is reflected in: 1) Tech Stack: Deep integration of quantum-inspired algorithms (Ising model) with mainstream AI architecture (Transformer), and seamless coupling with its own GPU hardware and software ecosystem via CUDA-Q, making performance advantages highly dependent on this closed loop; 2) Ecosystem Strategy: Lowering the barrier for developers through open source is intended to attract the AI/HPC community quickly, capturing developer mindshare and the solution market for 'near-term quantum' applications (e.g., combinatorial optimization) before quantum hardware matures, and forming early ecosystem lock-in.

Stage: Innovation Trigger

DECISION

For Vendor (IBM, Google, and other quantum hardware/software startups)

- Immediately initiate independent, fair benchmarking comparing the Ising model against their own quantum hardware/software in real business scenarios for performance and TCO.

- Accelerate development and open-source more user-friendly hybrid programming frameworks and cloud services for 'near-term quantum' applications to lower trial barriers and defend against ecosystem erosion.

Strategic Moves: Emphasize long-term advantages of quantum hardware while accelerating hybrid solution deployment.

- For specific combinatorial optimization scenarios like logistics and financial risk control, conduct small-scale POCs using the Ising model and compare with existing classical optimization solutions (e.g., advanced heuristics).

- Incorporate hybrid quantum-classical solutions (like Ising) into the technology roadmap as a short-term, low-cost validation option for assessing quantum computing ROI.

Action Guidance: Follow

For Investor

- Focus on startups specializing in quantum-classical hybrid algorithms, compiler optimization, and cross-platform toolchains.

- Re-evaluate short-term commercialization expectations for pure-play quantum hardware companies, monitoring their strategies to compete with the hybrid approach.

Key Risk: The performance advantage of the Ising model may be limited to specific problem types, and its technical approach faces inherent scalability bottlenecks of classical computing.

PREDICT

6 months (High confidence)

The open-source community will produce early application cases and performance reports using the Ising model in areas like logistics scheduling and financial portfolio optimization.

1 year (Medium confidence)

IBM, Google, and others will launch more powerful hybrid computing cloud services or tools, engaging in direct competition with NVIDIA for the 'near-term quantum' application ecosystem.

2 years (Medium confidence)

The scalability bottlenecks of the Ising model (e.g., memory and computational costs for problems beyond thousands of variables) will become prominent, driving the adoption of approximate algorithms like sparse attention within this framework.

3 years+ (Low confidence)

If quantum hardware achieves breakthroughs in error correction or specialized processors, it will form a differentiated competitive landscape with classical hybrid solutions for specific high-value problems.
