I. Background and Core Contradiction

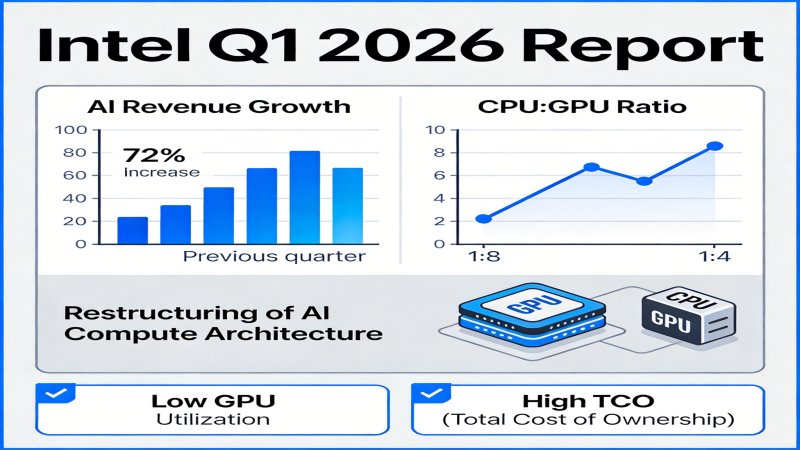

On April 24, 2026, Intel released its Q1 2026 financial report, which showed Data Center and AI (DCAI) revenue up 22% year over year and total revenue up 7%, and disclosed that the industry CPU:GPU ratio has rebounded from the historical 1:8 to 1:4. This figure is a key signal that AI compute architecture is being restructured. The core contradiction is that, over the past few years, AI compute has relied too heavily on GPUs (a 1:8 ratio was common in the industry), leading to low GPU utilization (research shows it can be as low as 35%) and high Total Cost of Ownership (TCO). As AI models enter large-scale inference deployment, general-purpose workloads such as data preprocessing and task scheduling have surged, exposing the bottleneck created by systematically undervalued CPUs. Optimizing the compute ratio to balance performance and TCO has become a clear driver of industry-wide infrastructure restructuring.
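To make the ratio shift concrete, the minimal Python sketch below converts a CPU:GPU ratio into a CPU count for a given GPU fleet. Only the 1:8 and 1:4 ratios come from the text; the cluster size of 1,024 GPUs and the function name `cpus_needed` are illustrative assumptions.

```python
import math


def cpus_needed(gpu_count: int, gpus_per_cpu: int) -> int:
    """Return the CPUs required for gpu_count GPUs at a 1:gpus_per_cpu CPU:GPU ratio."""
    if gpu_count < 0 or gpus_per_cpu <= 0:
        raise ValueError("gpu_count must be >= 0 and gpus_per_cpu must be > 0")
    # Round up: a partially filled group of GPUs still needs a whole CPU.
    return math.ceil(gpu_count / gpus_per_cpu)


GPU_COUNT = 1024  # hypothetical cluster size, chosen only for illustration

old = cpus_needed(GPU_COUNT, 8)  # historical 1:8 ratio
new = cpus_needed(GPU_COUNT, 4)  # rebounded 1:4 ratio
print(f"1:8 -> {old} CPUs, 1:4 -> {new} CPUs ({new / old:.1f}x)")
```

Under these assumptions, moving from 1:8 to 1:4 doubles the CPU count for the same GPU fleet (128 to 256 CPUs), which is why the ratio matters for procurement planning.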

II. Key Events and Driving Factors

A cluster of recent events across the commercial, technical, and academic fronts collectively validates and accelerates the return of the CPU's role.

- Intel Releases Q1 2026 Earnings, Exceeding Expectations (2026-04-24)

  - Event Facts: Intel's AI-related revenue grew 72% YoY; Intel officially confirmed that the industry CPU:GPU ratio is rebounding from 1:8 to 1:4; Xeon 6 became the sole designated CPU for NVIDIA's DGX-Rubin. Intel's stock rose 18% in after-hours trading following the earnings release.

  - Driving Factors: This event directly validates the commercial logic of rising CPU demand. Strong financial performance and certification by a key customer (NVIDIA) show the market that the compute architecture restructuring is real, boosting industry confidence in balanced configurations.

- Intel Releases Xeon 6 Technical White Paper (2026-04-10)

  - Event Facts: Xeon 6 integrates 64 AMX-2 AI acceleration units, delivering a 2.3x improvement in AI inference performance per chip over the previous generation; optimized PCIe 6.0 lanes support up to 4 GH200 GPUs; the chip is certified for DGX-Rubin compatibility, with 17% higher energy efficiency than competitors.

  - Driving Factors: This white paper presents Intel's core technological advantages in AI scenarios. By enhancing built-in AI acceleration and high-speed I/O, Xeon 6 aims to fit next-gen AI cluster deployments, directly strengthening the CPU's competitiveness in hybrid compute.

- Industry Media Validates Global Cloud Vendor Ratio Rebound (2026-04-22)

  - Event Facts: ⚠️Industry analysis (source not independently verified) indicates that the average CPU:GPU procurement ratio for AI clusters among the global TOP 10 cloud vendors reached 1:3.8 in 2026, doubling from 2025; CPU compute accounts for 62% in AI inference scenarios; Xeon 6 captured 42% of global AI server CPU orders in 2026.

  - Driving Factors: As the largest purchasers, cloud vendors are a market bellwether. This data provides third-party validation of the trend, indicating that ratio optimization is not an isolated case but a consensus-driven, large-scale industry behavior, which accelerates the formation of industry standards.

- Academic Research Publishes AI Cluster Compute Ratio Optimization Conclusion (2026-03-30)

  - Event Facts: ⚠️Alleged academic research (arXiv:2603.17245, authenticity pending verification) shows that under typical large model workloads, clusters with a 1:4 ratio achieve 27% higher overall throughput than clusters with a 1:8 ratio; insufficient CPU resources are a core cause of GPU utilization being only 35%; optimizing CPU configuration can reduce overall cluster TCO by up to 19%.

  - Driving Factors: This research provides theoretical and quantitative evidence against the old consensus (1:8). Its explicit performance and TCO figures give enterprises an actionable decision model for adjusting compute architecture, closing the loop from practice to theory.
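Taken at face value, the throughput and TCO figures cited for the 1:4 ratio can be combined into a single cost-per-unit-throughput estimate. The sketch below is back-of-the-envelope arithmetic only. It assumes the 27% throughput gain and the 19% TCO reduction (an "up to" figure from a source whose authenticity is still pending verification) hold at the same time for the same cluster, which the cited material does not state. The function name is hypothetical.

```python
def relative_cost_per_throughput(throughput_gain: float, tco_reduction: float) -> float:
    """Cost per unit of throughput after the change, relative to a baseline of 1.0.

    throughput_gain: fractional gain, e.g. 0.27 for +27%.
    tco_reduction:   fractional reduction, e.g. 0.19 for -19%.
    """
    if throughput_gain <= -1.0:
        raise ValueError("throughput_gain must be greater than -1.0")
    if not 0.0 <= tco_reduction < 1.0:
        raise ValueError("tco_reduction must be in [0.0, 1.0)")
    # New cost divided by new throughput, both relative to the baseline.
    return (1.0 - tco_reduction) / (1.0 + throughput_gain)


# Figures as cited in the text; treated here as simultaneous (an assumption).
ratio = relative_cost_per_throughput(throughput_gain=0.27, tco_reduction=0.19)
print(f"Relative cost per unit throughput: {ratio:.3f}")
print(f"Implied reduction: {(1 - ratio) * 100:.1f}%")
```

If both figures held together, cost per unit of throughput would fall by roughly 36%. Because the 19% figure is an upper bound, this should be read as a best case rather than an expected outcome.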

III. Evolution and Trends

- Past (2020-2024): AI development focused on breakthroughs in model training, making GPU parallel computing capability the absolute focus. The industry formed and widely followed a high-GPU, low-CPU ratio consensus (e.g., 1:8), severely marginalizing the CPU's role and masking system-level efficiency issues.

- Present (2025-2026): AI enters a phase of large-scale application deployment, with inference workloads surging. The value of the CPU is being rediscovered. Intel's Q1 2026 earnings, industry procurement data (~1:3.8), and academic research collectively validate that the compute ratio is undergoing a structural correction from 1:8 to around 1:4. New-generation CPUs like Xeon 6, by integrating AI acceleration units, are playing a prominent role in inference and data preprocessing.

- Future Trends: The CPU's share in AI compute will continue to increase, with hybrid compute (CPU+GPU) becoming mainstream. Driving factors include: 1) The expanding proportion of inference scenarios; 2) Higher data processing demands from complex applications like RAG and multimodal AI; 3) Unrelenting pressure for TCO optimization. The ratio may further optimize to 1:3 or lower, driving comprehensive changes in hardware procurement, R&D, and ecosystem collaboration.

IV. Key Players and Dynamics

| Player | Core Interest | Current Stance & Strategy |
|---|---|---|
| Intel | Regain server CPU market share, increase influence in AI infrastructure, drive revenue growth. | Active Proponent. Leverages Xeon 6's technical advantages (AMX-2, PCIe 6.0) and collaboration with NVIDIA on DGX-Rubin to seize the initiative. Strategy is to advocate for CPU's return to the AI core, positioning itself as a key supplier for hybrid compute. |
| NVIDIA | Maintain AI ecosystem advantage, consolidate leadership by optimizing overall solution efficiency. | Ecosystem Integrator. As the GPU leader, acknowledges CPU importance. Specifying Intel CPU in DGX-Rubin aims to optimize cluster performance. Fundamental strategy is to ensure any CPU works efficiently within its ecosystem to maximize GPU value. |
| Cloud Vendors (AWS, Google Cloud, Microsoft Azure) | Reduce AI cluster TCO, enhance service competitiveness, meet growing enterprise AI demand. | Balancing Practitioners & Rule Makers. Based on analysis of massive internal workloads, actively adjusting procurement strategy. The industry average ratio of ~1:3.8 shows they are optimizing cost and efficiency by increasing CPU allocation. |
| AMD | Compete for AI server CPU market share, maintain partner relationships. | Potential Challenger. Faces pressure from Intel's market rebound, needs to accelerate its own CPU (EPYC) tech iteration or seek breakthroughs in AI acceleration unit integration and partnerships (e.g., with NVIDIA). |

Competitive Dynamics: The focus of competition is shifting from a singular GPU performance race to a system-wide contest of CPU-GPU collaborative efficiency. Intel gains an advantage through early collaboration but must continuously address challenges from software ecosystems and cloud vendor in-house chips. NVIDIA seeks to maintain dominance through ecosystem control. Cloud vendors' procurement choices are the most significant market variable.

V. Impact and Signals

- For Enterprise AI Users:

  - Signal: Compute investment must shift from "stacking GPUs" to "systematic balanced design."

  - Impact: TCO for AI cluster deployment is expected to decrease (research shows up to a 19% reduction with an optimized ratio), and efficiency will improve. Enterprises should re-evaluate their compute procurement strategy: 1) First, analyze workloads, quantifying resource usage in stages such as data preprocessing and inference; 2) Reference industry research (e.g., TCO models for the 1:4 ratio) to build their own performance and cost simulations; 3) When planning new infrastructure, treat CPU performance and ratio as core metrics that are as important as the GPU.

- For Investors:

  - Signal: The AI compute investment theme expands from the "GPU single track" to the "hybrid compute industry chain."

  - Impact: Focus on: 1) CPU suppliers that benefit directly (e.g., Intel, AMD), tracking changes in their financial performance and market share; 2) Companies providing advanced interconnect technologies (PCIe/CXL) and efficient resource scheduling software; 3) Opportunities for AI infrastructure integrators in ratio optimization services.

- For Technology Vendors:

  - Intel: Directly benefits from rising demand but must overcome three major challenges: the AMX software ecosystem is less mature than CUDA, cloud vendors' in-house ARM chips are eroding its market, and dedicated inference chips (e.g., AWS Inferentia) compete for the same workloads.

  - NVIDIA: Short-term dominance remains solid, but the rising role of CPUs may alter supply chain dynamics. Its strategy is to maintain ecosystem stickiness by optimizing CPU-GPU synergy.

  - AMD/Other Competitors: Market demand for diversified compute creates a window of opportunity, but seizing it requires rapid technical follow-up or collaborative breakthroughs.
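The workload-analysis step recommended for enterprise users can be sketched as a small calculation: measure CPU and GPU time per request in each pipeline stage, then estimate how many GPUs one CPU socket can keep busy. Everything in this example is an assumption for illustration. The `StageProfile` type, the stage names, the per-request timings, and the 64-core socket are hypothetical, and the model assumes CPU work parallelizes perfectly across cores.

```python
from dataclasses import dataclass


@dataclass
class StageProfile:
    name: str
    cpu_core_ms: int  # CPU core-milliseconds per request (hypothetical measurement)
    gpu_ms: int       # GPU-milliseconds per request (hypothetical measurement)


def gpus_fed_per_socket(stages: list[StageProfile], cores_per_socket: int) -> float:
    """Estimate how many fully busy GPUs one CPU socket can supply with work."""
    cpu_total = sum(s.cpu_core_ms for s in stages)
    gpu_total = sum(s.gpu_ms for s in stages)
    if cpu_total <= 0 or gpu_total <= 0 or cores_per_socket <= 0:
        raise ValueError("need positive CPU time, GPU time, and core count")
    # CPU cores required to keep a single GPU continuously busy.
    cores_per_gpu = cpu_total / gpu_total
    return cores_per_socket / cores_per_gpu


# Hypothetical per-request profile of an inference pipeline.
PIPELINE = [
    StageProfile("preprocessing", cpu_core_ms=900, gpu_ms=0),
    StageProfile("inference", cpu_core_ms=300, gpu_ms=100),
    StageProfile("postprocessing", cpu_core_ms=400, gpu_ms=0),
]

gpus = gpus_fed_per_socket(PIPELINE, cores_per_socket=64)
print(f"Estimated balanced CPU:GPU ratio: 1:{gpus:.1f}")
```

With these made-up timings, each GPU needs 16 cores of CPU work, so a 64-core socket balances at about 1:4. Provisioning at 1:8 under the same profile would leave GPUs waiting on the CPU. Real audits would replace the hypothetical numbers with profiler measurements and account for memory and I/O limits that this sketch ignores.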

VI. Key Judgments

| Judgment | Importance | Action Recommendation | Confidence |
|---|---|---|---|
| The return of the CPU's role in AI compute is a sustainable trend; ratio optimization (~1:4) will become the new industry standard. | High. Restructuring compute architecture improves cluster efficiency, reduces TCO, directly impacting hardware market landscape and AI deployment costs. | 1. Investors should increase holdings in CPU-related stocks. 2. Enterprises need to immediately adjust AI infrastructure planning, focusing on balanced CPU-GPU configuration. 3. Vendors should increase R&D investment in hybrid compute. | High |
| Intel, with Xeon 6 and technical partnerships, will consolidate its leadership in the AI server CPU market in the short term. | Medium. Trend is favorable, but competition is fierce; share depends on product execution and ecosystem response. | Closely monitor Intel's subsequent earnings, Xeon 6 penetration in major cloud vendor instances, and its progress in addressing software ecosystem challenges. | Medium |
| The growth of AI inference and data preprocessing scenarios is the core factor driving the rebound in CPU demand. | High. Reveals the core characteristic of AI industrialization, defining the specific shape of future compute demand. | 1. Enterprises should prioritize CPU optimization investments to support inference workloads. 2. Vendors need to develop targeted solutions for inference and data processing. | High |

VII. Open Questions

- Will the CPU:GPU ratio drop further to 1:3 or lower? What are the driving factors?

  - Key drivers include: 1) Edge AI inference proliferation, fostering configurations centered on high-performance CPUs paired with lightweight accelerators; 2) Continuous performance leaps in CPU-integrated AI acceleration units, replacing low-end GPUs in more workloads; 3) Advancements in memory and I/O technologies (e.g., CXL), improving CPU efficiency in managing heterogeneous resources and reducing GPU dependency.

- How will other CPU vendors like AMD respond to this trend? Are there technological or collaborative breakthroughs?

  - Monitor AMD on: 1) Technology front: Whether the next-gen EPYC processors can surpass Intel in AI acceleration unit integration and memory bandwidth; 2) Collaboration front: Ability to establish deeper cooperation with NVIDIA (e.g., gaining recommendation in DGX systems) or secure targeted optimization with major AI software companies.

- How will AI model evolution (e.g., larger parameter models) affect compute ratio demand?

  - Larger models have a dual impact: Training needs drive powerful GPU clusters; simultaneously, their extreme memory requirements for inference and massive training data preprocessing needs may significantly increase reliance on high-performance CPUs (responsible for data supply and memory management). Ratio changes depend on the relative growth rates of these two demands.

- Do cloud vendors face supply chain or cost challenges in adjusting ratios?

  - Short-term challenges include: 1) Supply chain reshaping: Increasing high-end CPU procurement may alter bargaining relationships with suppliers; 2) Design costs: Redesigning servers for new ratios requires one-time engineering investment; 3) Software stack adaptation: Technical complexity in optimizing schedulers to leverage hybrid compute advantages. Long-term, TCO optimization benefits are expected to outweigh adjustment costs.

Why it Matters

Positioning: Landscape Reshaping. The AI computing structure is shifting from GPU-centric to CPU-GPU collaboration.

Key Factor: The core driver is the industrialization of AI entering the large-scale inference deployment phase. This leads to a surge in general-purpose computing loads such as data processing and task scheduling, turning the previous 1:8 CPU:GPU ratio into a systemic bottleneck (GPU utilization can be as low as 35%). Optimizing the ratio to reduce Total Cost of Ownership (TCO) and improve overall efficiency has become a hard requirement for cloud providers and AI users, driving a comprehensive restructuring of hardware procurement, R&D, and ecosystem collaboration.

Stage: Rapid Growth

DECISION

For Vendor (Intel)

- Accelerate AMX software ecosystem development and deep integration with CUDA/oneAPI to address the ecosystem gap.

- Launch optimized solutions for inference and data preprocessing scenarios in collaboration with leading cloud providers and ISVs.

Strategic Moves: Deepen system-level optimization cooperation with NVIDIA and seek differentiated competition against AMD.

For Enterprise AI User

- Immediately audit existing AI workloads to quantify CPU/GPU resource consumption in stages like data preprocessing and inference.

- In new infrastructure planning, treat CPU performance and ratio (e.g., 1:4) as core procurement metrics equally important as GPU.

Action Guidance: Act Immediately

For Investor

- Monitor order and market share changes of CPU suppliers (e.g., Intel, AMD) directly benefiting from the ratio adjustment.

- Explore opportunities in companies providing advanced interconnect technologies (PCIe/CXL) and efficient heterogeneous resource scheduling software.

Key Risk: The sustainability of the trend is constrained by the CUDA ecosystem moat and competition from cloud providers' in-house ARM/specialized chips.

PREDICT

1 year (High confidence)

The industry average CPU:GPU ratio for AI servers will stabilize between 1:3.5 and 1:4, becoming the mainstream standard for new clusters.

2 years (Medium confidence)

Intel will maintain leadership in the AI server CPU market with Xeon 6's first-mover advantage, but its share will face erosion from AMD and ARM.

3 years+ (Medium confidence)

The focus of computing architecture competition will deepen from hardware ratios to software-hardware co-optimization and unified memory pool technologies across CPU/GPU/DPU.
