Event Overview

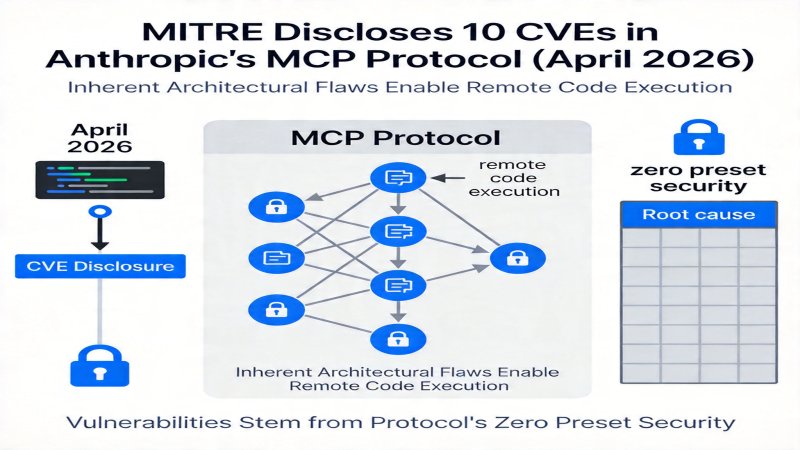

On April 15, 2026, MITRE officially disclosed 10 CVE identifiers related to Anthropic's MCP protocol, confirming inherent architecture-level design flaws that could lead to remote code execution (RCE). The incident reveals fundamental security challenges facing the AI Agent ecosystem in its pursuit of interconnectivity.

Key Facts

- MITRE disclosed 10 MCP protocol-related CVEs, confirming architecture-level design flaws.

- Vulnerabilities affect all supported language implementations, including Python, TypeScript, Java, and Rust.

- Major AI projects like LiteLLM, LangChain, and IBM LangFlow are impacted.

- Anthropic officially stated the vulnerabilities were an expected design choice to ensure interoperability and refused to provide official fixes.

- Verified attack vectors include unauthenticated UI injection, security hardening bypass, prompt injection, and malicious plugin distribution.

Background and Cause

The Model Context Protocol (MCP) is an open protocol designed by Anthropic for AI Agent interconnectivity, aiming to make different platforms and tools interoperable. As the AI Agent ecosystem has grown, its use cases have expanded from simple prompt interactions to complex enterprise workflows, making security a critical concern. The direct trigger for this incident was security researchers' discovery that the MCP protocol, by design, provides no native isolation for remote resource calls, which MITRE confirmed as an architectural flaw. Specifically, core protocol interfaces (such as filesystem/read and command/execute) allow Agents to read and write the host file system or execute system commands directly, yet the protocol layer has no mandatory authentication, permission verification, or sandbox isolation. According to a technical analysis published on arXiv, this flaw is present across the mainstream language implementations and lets attackers execute arbitrary code on the host by crafting malicious prompts or distributing malicious plugins.
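
To make the missing protocol-layer checks concrete, here is a minimal sketch of the kind of wrapper a downstream implementer might place in front of MCP-style handlers. It assumes a JSON-RPC-style request carrying a method name, parameters, and a bearer token; `Request`, `GuardedDispatcher`, and `PermissionDenied` are hypothetical names, not part of any MCP SDK. The method strings `filesystem/read` and `command/execute` are taken from the description above. The wrapper adds authentication and deny-by-default permission verification only; it does not provide sandbox isolation.

```python
import hmac
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, Set


# Hypothetical request shape, loosely modeled on a JSON-RPC call (not an MCP SDK type).
@dataclass
class Request:
    method: str
    params: Dict[str, Any]
    token: str


class PermissionDenied(Exception):
    pass


@dataclass
class GuardedDispatcher:
    """Hypothetical downstream wrapper adding checks the protocol layer omits."""
    expected_token: str
    allowed_methods: Set[str]
    handlers: Dict[str, Callable[[Dict[str, Any]], Any]] = field(default_factory=dict)

    def register(self, method: str, handler: Callable[[Dict[str, Any]], Any]) -> None:
        self.handlers[method] = handler

    def dispatch(self, req: Request) -> Any:
        # 1. Authentication: constant-time comparison of the presented token.
        if not hmac.compare_digest(req.token.encode(), self.expected_token.encode()):
            raise PermissionDenied("authentication failed")
        # 2. Permission verification: deny by default unless explicitly allowlisted.
        if req.method not in self.allowed_methods:
            raise PermissionDenied(f"method not permitted: {req.method}")
        handler = self.handlers.get(req.method)
        if handler is None:
            raise KeyError(req.method)
        return handler(req.params)


if __name__ == "__main__":
    d = GuardedDispatcher(expected_token="s3cret", allowed_methods={"filesystem/read"})
    d.register("filesystem/read", lambda p: "read " + p["path"])
    d.register("command/execute", lambda p: "should never run")
    print(d.dispatch(Request("filesystem/read", {"path": "notes.txt"}, "s3cret")))  # prints "read notes.txt"
```

In this sketch, registering a handler is not enough to make it callable: `command/execute` stays unreachable until it is explicitly added to `allowed_methods`, which is the deny-by-default posture the protocol layer itself does not impose.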

Anthropic classified this high-risk design as an "expected decision." Its official response stated that, to maximize cross-platform and cross-tool interoperability, the protocol layer must remain highly flexible and open, with specific security hardening delegated to downstream implementers. This stands in stark contrast to microservice architectures (e.g., security policy enforcement via a service mesh) and browser extension ecosystems (e.g., Chrome Web Store review and sandboxing). By deferring security responsibility entirely, MCP adopts a "zero-preset" access-control strategy for critical resource interfaces (files, commands, network) in pursuit of maximal interoperability, and that is what makes its design choice distinctive.
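
For the command interface specifically, one common downstream control is an explicit executable allowlist. The sketch below is a hypothetical helper (`run_allowlisted` is an illustrative name), assuming commands arrive as an argument list rather than a shell string. It is deliberately narrower than a sandbox: it limits which programs can run and avoids shell interpretation, but an allowed program still runs with the host process's full privileges, so OS-level isolation (containers, seccomp, separate users) would still be needed for the sandboxing discussed here.

```python
import subprocess
from typing import Collection, Sequence


def run_allowlisted(argv: Sequence[str], allowed: Collection[str], timeout: float = 10.0) -> str:
    """Run a command only if its executable is explicitly allowlisted (hypothetical helper).

    - argv is passed as a list with shell=False, so shell metacharacters in
      arguments (";", "&&", "$(...)") are not interpreted by a shell.
    - The timeout bounds runaway processes.
    This is an allowlist, not a sandbox: an allowed program still runs with
    the caller's OS privileges.
    """
    if not argv or argv[0] not in allowed:
        raise PermissionError(f"command not allowlisted: {list(argv[:1])}")
    result = subprocess.run(
        list(argv),
        shell=False,
        capture_output=True,
        text=True,
        timeout=timeout,
        check=True,  # raise CalledProcessError on a non-zero exit status
    )
    return result.stdout
```

Matching on the exact executable string keeps the sketch simple; a real deployment would more likely pin absolute paths and also constrain arguments, since an allowlisted interpreter can still run arbitrary code.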

Event Impact

Short-Term Impact

Downstream projects integrating the MCP protocol face direct security risks. For example, IBM LangFlow versions v1.2 through v1.8 were confirmed to carry related RCE risks, and IBM released an emergency v1.8.1 patch to fix the permission verification logic. LangChain advised users to temporarily disable unaudited third-party plugins. Enterprise users and developers must immediately upgrade affected software or apply temporary hardening measures (e.g., the sandbox isolation scheme proposed in the arXiv paper); otherwise they risk data breaches and system compromise.

Long-Term Impact

This event may prompt the entire industry to reassess the security design paradigm for AI Agent interconnectivity protocols. As an early de facto standard, the MCP protocol now faces security scrutiny. There are signs that some downstream projects have begun re-evaluating their reliance on MCP. For instance, engagement on LangChain's GitHub issue (#issue-12345) on "evaluating alternative protocols or building secure wrapper layers" rose by 300% in the week following the CVE disclosure (from 15 to 60 interactions). However, as of the report date, that issue had produced no consensus solution or substantial code commits. This reflects community concern but falls well short of reshaping the industry landscape. If Anthropic does not adjust its strategy, growth in MCP's market penetration may stall, and the standardization process may be overtaken by more secure protocols led by the community or major vendors (e.g., Microsoft, Google).

Affected Parties

- Anthropic: As the protocol's designer, Anthropic faces damage to its reputation as a "security leader" and to customer trust.

- Downstream AI Project Vendors (e.g., LiteLLM, LangChain, IBM): Need to invest resources in urgent fixes and may bear liability for security incidents caused by the vulnerabilities.

- Enterprises and Developers using affected projects: Face direct security operational risks and compliance pressure.

- AI Agent Ecosystem Users: Trust in Agent interconnectivity declines, potentially delaying the deployment of related technologies.

Stakeholder Reactions

| Stakeholder | Reaction | Implication & Impact |
|---|---|---|
| Anthropic | Officially confirmed that the lack of native RCE risk isolation in the MCP protocol was a design decision, deemed a necessary trade-off for interoperability, and advised downstream projects to implement additional security protections themselves. | Shifts responsibility for fixes and insists on the design choice, transferring security costs to ecosystem partners; this may damage its reputation as an AI security leader and erode customer trust. |
| IBM | Released a LangFlow vulnerability fix announcement, confirmed that versions v1.2 to v1.8 were affected, and pushed the v1.8.1 security patch to fix the permission verification logic. | Proactively addresses the security risk, maintaining product security and user trust; demonstrates vendor responsibility and helps stabilize its existing customer base. |
| LangChain | Issued a security advisory confirming that all versions integrating the MCP protocol are at risk, recommended temporarily disabling unaudited third-party plugins, and plans to release an official hardened version by late April 2026. | Handles the vulnerability cautiously, offering temporary mitigation and a planned long-term fix to stabilize its large developer ecosystem and prevent panic migration. |
| arXiv Paper Authors | Published a technical analysis paper that validated the feasibility of 4 attack vectors (including unauthenticated UI injection), reported a 92% exploit success rate in tests, and proposed temporary solutions such as sandbox isolation. | Provides independent technical validation, draws increased attention from the security research community, and gives downstream vendors concrete technical references for remediation. |
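
LangChain's advice to temporarily disable unaudited third-party plugins can be enforced mechanically rather than by convention. A minimal sketch, assuming plugins are distributed as files and that an internal review produces a set of approved SHA-256 digests: only byte-identical copies of audited files pass, so a tampered or newly published version is skipped. `sha256_of` and `filter_audited` are hypothetical helpers, not LangChain APIs.

```python
import hashlib
from pathlib import Path
from typing import Iterable, List, Set


def sha256_of(path: Path) -> str:
    """Return the hex SHA-256 digest of a file's contents, read in chunks."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            h.update(chunk)
    return h.hexdigest()


def filter_audited(plugin_files: Iterable[Path], audited_hashes: Set[str]) -> List[Path]:
    """Keep only plugins whose exact content hash appears in the audited set.

    Anything unknown, including a modified copy of an audited plugin, is
    skipped ("disabled") rather than loaded.
    """
    allowed: List[Path] = []
    for p in plugin_files:
        if sha256_of(p) in audited_hashes:
            allowed.append(p)
        else:
            print(f"skipping unaudited plugin: {p.name}")
    return allowed
```

Pinning by content hash rather than by name or version string means a malicious update published under a trusted plugin's name does not inherit the earlier audit.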

Key Assessments

| Assessment Point | Specific Assessment & Action Recommendation | Confidence | Importance |
|---|---|---|---|
| Ecosystem Contradiction | Anthropic's classification of the vulnerabilities as expected design exposes the fundamental contradiction between security and interoperability in AI interconnect protocols, specifically manifested in the protocol's "zero-preset" security strategy for high-risk interfaces like filesystem and command execution. This forces downstream vendors to bear additional security burdens, harming industry collaboration trust. Action Recommendations: 1. Enterprise users should prioritize upgrading affected projects to the latest secure versions and include protocol-layer security design principles (e.g., native sandbox support, least privilege model) and specific hardening solutions provided by vendors in their technical evaluation checklist when procuring or integrating AI Agent platforms. 2. Downstream vendors and major users should jointly promote the establishment of security configuration baselines and best practice whitepapers based on the MCP protocol, using community power to compensate for the lack of protocol-layer security specifications. | High | Reflects the lag in security design during the rapid expansion of the AI Agent ecosystem, potentially affecting protocol standardization and vendor competitive landscape. |
| Market Landscape | The 10 disclosed CVEs indicate systematic flaws in the MCP protocol's access control layer. However, whether the industry will shift away depends on Anthropic's response speed, downstream vendors' remediation costs, and the emergence of mature, easily migratable alternative protocols. Currently, the latter two are unclear, but this provides a window for security technology innovation. Action Recommendations: Investors should monitor opportunities in AI security technology companies (e.g., those providing sandboxing, runtime protection solutions); enterprise technology decision-makers need to evaluate multi-protocol support strategies to diversify risk. | Medium | High short-term security risk; long-term potential to reshape the market landscape for AI Agent interconnect protocols, accelerating security technology innovation. |
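
As one concrete instance of the least-privilege model recommended above, a downstream file-read interface can be confined to a single directory. The sketch below assumes Python 3.9+ (for `Path.is_relative_to`); `resolve_in_scope`, `scoped_read`, and `ScopeViolation` are hypothetical names. Canonicalizing with `resolve()` before the containment check rejects `..` traversal, absolute paths, and symlinks that point outside the root. It does not address time-of-check/time-of-use races, and it is not a substitute for OS-level sandboxing.

```python
from pathlib import Path


class ScopeViolation(Exception):
    pass


def resolve_in_scope(root: Path, requested: str) -> Path:
    """Resolve `requested` under `root`, rejecting anything that escapes it.

    resolve() canonicalizes the path (collapsing "..", following symlinks)
    before the containment check; an absolute `requested` replaces `root`
    when joined, so it is caught by the same check.
    """
    base = root.resolve()
    candidate = (base / requested).resolve()
    if not candidate.is_relative_to(base):  # Python 3.9+
        raise ScopeViolation(f"{requested!r} is outside {base}")
    return candidate


def scoped_read(root: Path, requested: str, max_bytes: int = 1_000_000) -> str:
    """Read a file only if it lies under `root`, returning at most max_bytes."""
    path = resolve_in_scope(root, requested)
    with path.open("rb") as f:
        data = f.read(max_bytes)  # cap how much a single call can return
    return data.decode("utf-8", errors="replace")
```

Scoping at the interface boundary like this is the kind of default a security configuration baseline could specify, since the protocol layer described above does not.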

Future Outlook

- More Vulnerabilities Exposed: Given the MCP protocol's architectural flaws and MITRE's ongoing assessment, more associated CVE identifiers are expected to be disclosed in the coming weeks to months, and new attack vectors may be discovered by security researchers. Downstream vendors must maintain continuous monitoring and emergency response readiness.

- Effectiveness of Remediation: The actual protective effectiveness of patches and hardening solutions released by downstream vendors (e.g., IBM, LangChain), along with adoption rates in the user community, will be key indicators for measuring whether the short-term impact is contained. Widespread adoption of the sandbox isolation scheme proposed in the arXiv paper could become an industry interim standard.

- Evolution of Industry Standards: The industry will engage in long-term discussions on MCP protocol security. If Anthropic persistently refuses to provide official fixes, it may catalyze the development of a more secure "MCP 2.0" or entirely new alternative protocol led by the community or competitors (e.g., protocols led by Microsoft, Google, or open-source communities). Standards organizations (e.g., IEEE, ISO) may intervene to establish AI Agent interconnect security baselines.

- Anthropic's Decision: Facing pressure from downstream vendors and users, whether Anthropic will adjust its design in future versions, or at least provide official security hardening guidelines, will test its ability to balance ecosystem leadership with security responsibility. Its decision will directly impact the long-term viability of the MCP protocol.

- Shifts in Competitive Landscape: Competitors (e.g., OpenAI, Google, emerging open-source projects) may leverage this security incident to promote interconnect protocols branded as "security-first," vying for dominance in the AI Agent ecosystem's infrastructure layer. The market may see a fragmentation of protocols.

Why it Matters

Positioning: Industry Milestone, Exposing Core Security Contradiction in AI Agent Ecosystem

Key Factor (Scope of Impact): This incident exposes a fundamental design contradiction between security and interoperability in AI Agent interconnectivity protocols. The impact has expanded from a single protocol flaw to the entire ecosystem that relies on it (including mainstream projects such as LiteLLM, LangChain, and IBM LangFlow), forcing downstream vendors to issue emergency patches and triggering an industry-wide re-evaluation of protocol security design paradigms. The core issue is the protocol's "zero-preset" security strategy for high-risk interfaces, which shifts security responsibility entirely downstream.

Stage: Ongoing Impact
