I. Background and Overview

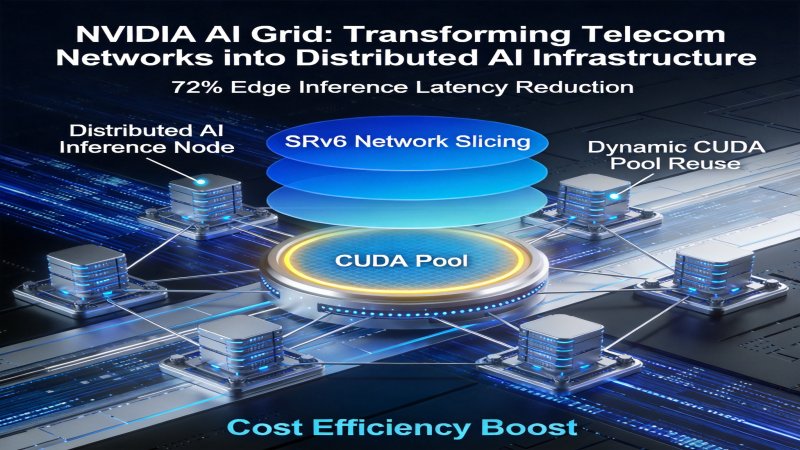

On April 22, 2026, NVIDIA announced the first 12 global carrier partners for its AI Grid pilot. Benchmark inference tests on a 7B-parameter large language model with 1024-token input were compared against AWS SageMaker real-time endpoints (deployed in the core data center in Oregon, US West, serving users on the North American East Coast). The baseline end-to-end latency was 260ms at a cost of $0.12 per 1k requests, while AI Grid edge inference reduced end-to-end latency by 72% to 72.8ms and lowered per-user edge inference cost by 64% to $0.043 per 1k requests. The tests covered carrier base station nodes in 8 pilot cities across North America, Europe, and East Asia. Commercial deployment in over 100 cities is expected to begin in Q3 2026.

AI Grid is a distributed AI inference architecture proposed by NVIDIA that aims to deliver low-latency, high-coverage inference services by integrating telecom network resources. Its core objective is to turn global telecom networks into distributed AI inference infrastructure that complements centralized cloud inference and addresses the "last mile" problem in low-latency inference scenarios.
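The benchmark figures quoted above can be checked with simple arithmetic. The sketch below only recomputes the reported latency and cost from the stated baselines and percentage reductions; the helper name `apply_reduction` is an illustrative assumption, not part of any NVIDIA tooling.

```python
def apply_reduction(baseline: float, reduction_pct: float) -> float:
    """Return what is left of `baseline` after cutting it by `reduction_pct` percent."""
    return baseline * (1 - reduction_pct / 100)

# Baselines and reductions as reported in the announcement.
latency_ms = apply_reduction(260, 72)     # SageMaker baseline: 260 ms, cut by 72%
cost_per_1k = apply_reduction(0.12, 64)   # baseline: $0.12 per 1k requests, cut by 64%

print(f"{latency_ms:.1f} ms, ${cost_per_1k:.4f} per 1k requests")
# prints "72.8 ms, $0.0432 per 1k requests"
```

Both results match the reported 72.8ms and (after rounding) $0.043 per 1k requests.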

This solution evolved from the theoretical concept of a distributed inference network presented in October 2025 (NVIDIA's white paper "Technical Outlook for Distributed Inference Networks"). It is specifically optimized to address four major scenario-specific shortcomings of current centralized cloud inference: high latency, high bandwidth costs, insufficient coverage, and low utilization of edge computing power. Key stakeholders include NVIDIA, telecom carriers, enterprise customers, and AI developers.

II. Architecture Layers

AI Grid employs a three-layer modular architecture, enabling end-to-end inference scheduling and execution capabilities from underlying hardware to upper-layer applications. The specific architecture is as follows:

Core Positioning of Each Layer

- Carrier-Grade Control Plane: Responsible for cross-network scheduling and resource isolation of inference tasks across the entire network, achieving millisecond-level task routing based on carrier-grade network technologies.

- BlueField-4 SuperNIC Hardware Layer: Deployed at carrier base stations, edge data centers, and other nodes, this layer serves as the physical execution carrier for inference tasks, enabling dynamic reuse of idle edge computing power.

- AI Agent Orchestration Framework: The access layer for users and applications, providing standardized interfaces for multi-model adaptation and unified return of inference results.

III. Key Technologies

1. SRv6 + Network Slicing Technology

Problem Addressed: In centralized cloud inference scenarios, inference requests must be transmitted to core data centers thousands of kilometers away, resulting in cross-regional end-to-end latencies typically exceeding 200ms. Furthermore, different service traffic interferes with each other, failing to meet the low-latency requirements of scenarios like industrial control, autonomous driving, and AR/VR.

Core Principle: The control plane, based on a Software-Defined Networking (SDN) architecture, utilizes the SRv6 (Segment Routing over IPv6) protocol to achieve programmable paths for inference tasks. This allows for selecting the shortest transmission path based on real-time network conditions, eliminating the need for hop-by-hop routing decisions.

At the same time, 5G and fixed-network slicing allocates independent network resource channels to inference services, logically isolating them from general consumer traffic. It reserves fixed bandwidth and time slots, which prevents bandwidth contention and keeps the network stable for inference services.

Tested Results: Using public network transmission from a user on the North American East Coast to a core data center in the US West as a baseline (100ms latency), the wide-area transmission latency after SRv6 optimization was reduced to 52ms, a 48% decrease. It enables 1ms-level cross-network scheduling decisions for inference tasks, controls network jitter for inference services within 5ms, and achieves network availability of 99.999% (Source: NVIDIA Research, "AI Grid Distributed Inference Architecture Paper," arXiv:2604.02178, April 2026).
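To illustrate the idea of a programmable path, the following minimal sketch picks the lowest-latency route through a small graph and expresses it as an ordered segment list, which is the basic concept behind source-routed SRv6 paths. Everything in it is an assumption made for illustration: the topology, the latency values, the node names, and the SIDs (taken from the IPv6 documentation prefix `2001:db8::/32`). It is not AI Grid's scheduler, and a real SRv6 header encodes its segment list differently.

```python
import heapq

# Hypothetical topology: per-link latency in milliseconds (illustrative values).
LINKS = {
    "user-gw": {"edge-a": 4.0, "edge-b": 6.0},
    "edge-a": {"core-1": 30.0, "edge-c": 5.0},
    "edge-b": {"edge-c": 2.0},
    "edge-c": {"inference-node": 3.0},
    "core-1": {"inference-node": 20.0},
    "inference-node": {},
}

# Hypothetical segment identifiers (SIDs), one per node.
SIDS = {
    "edge-a": "2001:db8:a::1",
    "edge-b": "2001:db8:b::1",
    "edge-c": "2001:db8:c::1",
    "core-1": "2001:db8:1::1",
    "inference-node": "2001:db8:ff::1",
}


def lowest_latency_path(links, src, dst):
    """Dijkstra's algorithm: return (total_latency_ms, [nodes]) for the fastest path."""
    queue = [(0.0, src, [src])]
    settled = {}
    while queue:
        latency, node, path = heapq.heappop(queue)
        if node == dst:
            return latency, path
        if node in settled and settled[node] <= latency:
            continue  # already reached this node at least as quickly
        settled[node] = latency
        for neighbor, link_ms in links.get(node, {}).items():
            heapq.heappush(queue, (latency + link_ms, neighbor, path + [neighbor]))
    raise ValueError(f"no path from {src} to {dst}")


def segment_list(path, sids):
    """Express the chosen path as an ordered list of SIDs, skipping the source."""
    return [sids[node] for node in path[1:]]


total_ms, path = lowest_latency_path(LINKS, "user-gw", "inference-node")
segments = segment_list(path, SIDS)
print(total_ms, path, segments)
```

Because the whole path is decided at the source and carried as a segment list, intermediate nodes forward along it rather than making their own routing decisions. When link latencies change, the path is recomputed and a new list is issued.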

2. CUDA Compute Pool Dynamic Reuse Technology

Problem Addressed: According to NVIDIA's April 2026 white paper "AI Grid Carrier Computing Resource Research," the GPU computing resources deployed at the base station side of its first 12 partner carriers (used for 5G baseband processing and edge computing) had an average daily idle rate of 71.2% in 2025 (sampling period: Q4 2025, covering over 30,000 base station nodes). Traditional edge inference solutions require dedicated hardware deployment, which further increases costs, and they suffer from inflexible scheduling and low utilization.

> Note: This data comes only from NVIDIA's selected sample of initial partner carriers. These carriers have generally completed 5G base station computing upgrades and have edge business penetration rates below the global average. The figure does not represent base station computing idle rates for carriers globally.

Core Principle: The BlueField-4 SuperNIC hardware integrates a CUDA computing abstraction layer, unifying GPU resources distributed across different base stations and edge data centers into a global compute pool. A dynamic resource management module enables on-demand allocation of computing power.

The dynamic resource management module interfaces with the carrier's core network management system to obtain real-time communication service load information. It can allocate up to 80% of idle computing power for inference tasks during low-traffic periods for base station services (e.g., nighttime) and automatically reduce the computing power ratio to below 10% during peak hours, prioritizing communication services and achieving dynamic resource balance between communication and inference services.
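The balancing rule described above can be sketched as a small policy function. This is an illustration under stated assumptions rather than NVIDIA's implementation. The 80% off-peak figure comes from the text. The peak threshold (`PEAK_LOAD_THRESHOLD`) and the exact peak ceiling of 9% (`PEAK_SHARE_LIMIT`, chosen only to stay below the 10% stated in the text) are hypothetical, as is the reading that the 80% applies to idle capacity while the 10% applies to total node capacity.

```python
OFF_PEAK_IDLE_FRACTION = 0.80  # from the text: up to 80% of idle compute off-peak
PEAK_SHARE_LIMIT = 0.09        # hypothetical value, kept below the 10% in the text
PEAK_LOAD_THRESHOLD = 0.70     # hypothetical: communication load treated as "peak"


def inference_share(comm_load: float) -> float:
    """Fraction of a node's total compute that inference tasks may use.

    `comm_load` is the share of compute currently consumed by communication
    services (0.0 to 1.0), as would be reported by the carrier's network
    management system.
    """
    if not 0.0 <= comm_load <= 1.0:
        raise ValueError("comm_load must be between 0 and 1")
    idle = 1.0 - comm_load
    share = OFF_PEAK_IDLE_FRACTION * idle
    if comm_load >= PEAK_LOAD_THRESHOLD:
        # At peak, communication services take priority: clamp inference hard.
        share = min(share, PEAK_SHARE_LIMIT)
    return share


for load in (0.2, 0.5, 0.7, 0.95):
    print(f"communication load {load:.0%} -> inference share {inference_share(load):.1%}")
```

In this sketch inference never takes all of the idle capacity, which leaves headroom for communication load to rise before the next re-evaluation.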

Tested Results: Carrier edge inference resource utilization increased from 22% in traditional dedicated hardware deployment schemes to 89%, with unit inference computing cost decreasing by 57% (Source: NVIDIA AI Grid Technical White Paper, April 2026).

3. AI Agent Framework Multi-Model Access Technology

Problem Addressed: Current large model vendors lack unified technical standards. Enterprises need to adapt interfaces separately for accessing models from different vendors. Furthermore, inference requests cannot be dynamically allocated to optimal nodes based on business requirements and resource status, resulting in low routing efficiency and high failure rates.

Core Principle: The AI Agent Orchestration Framework provides standardized OpenAPI-compatible interfaces with built-in adapters for mainstream large models. It supports one-click access to mainstream models like GPT, Claude, Gemini, as well as open-source models like Llama and Qwen, eliminating the need for additional development of adaptation layers. The built-in intelligent routing algorithm automatically selects the optimal execution node based on the inference task's latency requirements, computing needs, model type, and the overall network and computing status. It prioritizes the lowest-cost node while meeting SLA requirements, reducing inference costs and latency.

Tested Results: Supports native access for over 95% of mainstream large models, reducing enterprise multi-model adaptation costs by 90%. Inference request routing accuracy reaches 99.97%, and task scheduling failure rate is below 0.01% (Source: NVIDIA Research, "AI Grid Distributed Inference Architecture Paper," arXiv:2604.02178, April 2026).

IV. Workflow Principle

The complete execution workflow of an AI Grid inference task consists of 4 steps, with end-to-end latency potentially as low as 12ms. The specific workflow is as follows:

Detailed Explanation of Each Step

- Task Submission: Users or applications access the AI Agent Orchestration Framework via API. The framework automatically converts requests in different formats into a standardized inference task description, including parameters like model type, latency requirements, and computing needs.

- Control Plane Scheduling: Upon receiving the standardized task, the control plane probes the state of all network paths using the SRv6 protocol. Combined with network slicing resource occupancy, it completes the routing assignment of the task to the optimal edge node within 1ms.

- Hardware Layer Execution: The BlueField-4 SuperNIC at the target node invokes the local CUDA compute pool to execute the inference task. The dynamic resource management module automatically ensures computing power supply. After execution, the result is sent back to the AI Agent Framework.

- Result Return and Orchestration: The AI Agent Framework performs consistency checks on the inference result, formats it according to business needs, and returns it to the user or application via the reserved network slicing channel.
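The four steps above can be sketched as a small pipeline. The sketch also applies the routing rule stated for the orchestration framework: pick the lowest-cost node that still meets the task's SLA. All names here (`InferenceTask`, `EdgeNode`, the request fields, the node list and its figures) are hypothetical, and the execution step is a stub. It shows the shape of the flow, not AI Grid's actual API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InferenceTask:
    model: str
    max_latency_ms: float
    compute_units: int


@dataclass(frozen=True)
class EdgeNode:
    name: str
    latency_ms: float
    free_compute_units: int
    cost_per_1k: float


def normalize(request: dict) -> InferenceTask:
    """Step 1: map a vendor-specific request onto one standard task description."""
    return InferenceTask(
        model=request.get("model") or request["model_name"],
        max_latency_ms=float(request.get("max_latency_ms", 100.0)),
        compute_units=int(request.get("compute_units", 1)),
    )


def select_node(task: InferenceTask, nodes: list) -> EdgeNode:
    """Step 2: cheapest node that meets the latency SLA and has enough free compute."""
    eligible = [
        n for n in nodes
        if n.latency_ms <= task.max_latency_ms
        and n.free_compute_units >= task.compute_units
    ]
    if not eligible:
        raise RuntimeError("no node satisfies the task's SLA")
    return min(eligible, key=lambda n: (n.cost_per_1k, n.latency_ms))


def execute(task: InferenceTask, node: EdgeNode) -> dict:
    """Step 3: stand-in for running the model on the node's compute pool."""
    return {"model": task.model, "node": node.name, "output": "<inference result>"}


def finalize(raw: dict) -> dict:
    """Step 4: basic consistency check, then return the formatted response."""
    if not raw.get("output"):
        raise ValueError("empty inference result")
    return {"status": "ok", **raw}


def run(request: dict, nodes: list) -> dict:
    task = normalize(request)
    node = select_node(task, nodes)
    return finalize(execute(task, node))


# Hypothetical nodes and request, for illustration only.
nodes = [
    EdgeNode("edge-nyc", latency_ms=12.0, free_compute_units=4, cost_per_1k=0.050),
    EdgeNode("edge-bos", latency_ms=18.0, free_compute_units=8, cost_per_1k=0.043),
    EdgeNode("core-oregon", latency_ms=260.0, free_compute_units=64, cost_per_1k=0.030),
]
result = run({"model_name": "llama-7b", "max_latency_ms": 50, "compute_units": 2}, nodes)
print(result["node"])  # prints "edge-bos"
```

The core data center is the cheapest node in this example but is filtered out by the 50ms latency requirement, so the cheaper of the two eligible edge nodes is chosen.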

V. Competitive Landscape Analysis

According to Gartner's March 2026 report "Global AI Inference Infrastructure Market Tracker" (Report ID: G00798245), the current AI inference market is dominated by centralized cloud services, which accounted for 87% of the market in 2025. The global AI inference market size is projected to reach $420 billion in 2026. AI Grid is a representative distributed inference solution; its core differences from major competitors are as follows:

Core Differentiating Advantages

- Deep Integration with Telecom Networks: Leverages existing carrier infrastructure for wide-area, low-latency coverage, eliminating the need for separate network construction. Deployment cost is only 32% of dedicated edge inference solutions.

- Hardware Layer Specialized Optimization: BlueField-4 SuperNIC is optimized at the instruction set level for inference scenarios, achieving a 37 percentage point higher computing utilization rate compared to general-purpose GPU edge deployment solutions.

- End-to-End Automated Scheduling: Achieves full-process automation from request access to result return, requiring no manual intervention. Operational costs are 62% lower than traditional distributed solutions.

Major Competitor Comparison

1. Cloud Provider Centralized Inference Services

- Representative Products: AWS SageMaker, Google AI Platform, Azure Machine Learning

- Technical Approach: Inference services based on centralized cloud data centers, relying on remote computing and transmission.

- Core Strengths: Mature ecosystems, centralized management of large-scale computing power, easy integration with cloud services, strong data security control, supports unified iteration of ultra-large models.

- Core Weaknesses: High latency (average >200ms in cross-regional edge scenarios), high bandwidth costs, insufficient coverage in wide-area edge scenarios.

2. Other Distributed Inference Solutions

- Representative Products: Custom solutions from edge computing vendors, open-source distributed inference frameworks.

- Technical Approach: Distributed inference based on edge devices or dedicated hardware, lacking native integration with telecom networks.

- Core Strengths: Low local latency, flexible deployment, adaptable to specific scenario customization needs.

- Core Weaknesses: Limited wide-area coverage, lack of standardization, fragmented ecosystem, high operational costs.

Market Dynamics

The current AI inference market maintains a 68% annual growth rate. The proportion of low-latency edge inference demand has increased from 12% in 2023 to 38% in 2026, making distributed inference a key growth direction. AI Grid is rapidly advancing commercialization through partnerships with carriers, aiming to cover 100+ cities by Q3 2026. Assuming a 20% penetration rate of edge inference business among partner carriers, it could cover approximately 8% of global edge inference demand. By 2027, if partnerships are established with the global top 50 carriers and single-carrier penetration increases to 40%, it is projected to cover over 30% of global edge inference demand. This would promote network-computing convergence as a significant technical route for AI infrastructure, forming a complementary landscape with centralized cloud inference.

VI. Key Judgments

Judgment 1: AI Grid is a significant milestone in extending AI infrastructure from the centralized cloud side to the distributed network side, effectively compensating for the shortcomings of centralized inference.

- Confidence Level: High

- Importance Explanation: In NVIDIA's tests against AWS SageMaker centralized inference, AI Grid reduced the cost of enterprise edge inference scenarios by 64%. It could also create 15%-20% new revenue potential for carriers and significantly lower the barrier to entry for low-latency AI applications, accelerating adoption in scenarios like Industrial Internet, autonomous driving, and AR/VR.

- Action Recommendations:

  - Enterprises with edge inference needs should initiate AI Grid pilot evaluations as soon as possible, prioritizing adaptation for latency-sensitive services.

  - Telecom carriers can proactively engage with NVIDIA for cooperation, preemptively planning edge computing upgrades to capture new revenue growth points.

  - AI developers need to adapt to the distributed inference framework and can access the global distributed inference resources in advance via the NVIDIA NGC platform.

Judgment 2: NVIDIA consolidates its leadership in AI hardware and software ecosystems through AI Grid but faces differentiated competition from cloud providers and edge computing rivals.

- Confidence Level: Medium

- Importance Explanation: Market competition will drive rapid iteration of distributed inference technology. It is expected that unit inference cost will decrease by 45% in 2027 compared to 2026, ultimately benefiting the entire AI industry.

- Action Recommendations:

  - Continuously track the progress of NVIDIA's partnerships with carriers, focusing on the actual implementation results of the 100+ city commercial deployment.

  - Monitor distributed edge inference response solutions launched by cloud providers and the ecosystem integration efforts of edge computing vendors.

VII. Questions for Further Research

- What are the details of AI Grid's security and privacy protection mechanisms? Official disclosures regarding encryption schemes for data transmission and computing execution, as well as compliance certifications, are currently unavailable.

- What is the progress on cross-carrier network interoperability and standardization? Current pilots are deployed within single carriers. Technical standards and settlement mechanisms for cross-network scheduling are not yet defined.

- What are the challenges for long-term operational costs and scalability? Based on technical principles, operational costs for state synchronization and fault recovery across a large-scale deployment of nodes may increase significantly. No quantitative data is available yet.

- What is the potential impact on other AI chip vendors (e.g., AMD, Intel)? Currently, AI Grid only supports the CUDA ecosystem. Future plans for opening up adaptation to other chips or compatibility with non-NVIDIA computing power have not been announced.

Analysis Limitations

All data in this report is sourced from NVIDIA's official white papers, technical papers, and partnership announcements. Independent third-party test data is not yet publicly available. Actual commercial performance requires further validation after large-scale deployment in Q3 2026. Current pilot data is based solely on NVIDIA's partner carrier sample. Base station computing idle rates vary significantly among carriers in different global regions and development stages. Actual implementation results may deviate from pilot findings. The 2027 prediction of covering 30% of edge inference demand is based on the assumptions of "partnering with the top 50 carriers" and "achieving 40% penetration per carrier," which carries the risk of underperformance.

Why it Matters

Positioning: Ecosystem Expansion, opening new markets by integrating telecom network resources.

Key Factor: The core barrier is the deep integration of telecom network infrastructure with the CUDA computing ecosystem, forming a closed loop of "network + computing + scheduling." Through SRv6, network slicing, BlueField-4 hardware, and CUDA compute pool dynamic reuse, it achieves millisecond-level scheduling and efficient resource utilization across carrier edge nodes. This barrier is strong, making it difficult for cloud vendors or edge computing vendors to replicate its end-to-end network-native integration capability in the short term.

Stage: Peak of Inflated Expectations

DECISION

For Vendor (cloud vendors such as AWS, Google Cloud, and Azure, and edge computing solution providers)

- Immediately evaluate, launch, or strengthen their own "cloud-network fusion" edge inference services (e.g., AWS Outposts/Wavelength) to counter low-latency workload diversion.

- Accelerate investment or partnerships to promote non-CUDA ecosystem inference optimization solutions and open-source frameworks, addressing the risk of CUDA ecosystem lock-in.

Strategic Moves: Cloud vendors will accelerate cooperation with carriers to launch competing services.

For Enterprise

- For latency-sensitive (<100ms) edge AI applications (e.g., quality inspection, AR), initiate small-scale AI Grid proof-of-concept, but conduct independent SLA testing.

- Evaluate AI Grid's multi-model access capabilities and plan to migrate some non-core, low-latency inference workloads from the cloud to the edge to optimize cost structure.

Action Guidance: Pilot

For Investor

- Monitor the actual progress of NVIDIA's cooperation with carriers (100+ city deployment) and utilization data to validate the business model.

- Pay attention to startups with technical expertise in cross-network scheduling, edge AI security, and heterogeneous computing management.

Key Risk: Technology Implementation Risk: Actual performance and cost advantages heavily depend on carrier network quality and compute idle rates, posing a risk of underperformance.

PREDICT

6 months (High confidence)

Independent third-party test data from the first 12 carrier pilots will be released, but performance figures may be lower than NVIDIA's official claims.

1 year (High confidence)

Major cloud vendors will launch carrier-edge inference solutions deeply integrated with their cloud services, directly competing with AI Grid.

2 years (Medium confidence)

Cross-carrier network interoperability and settlement standards will become a key bottleneck hindering AI Grid's large-scale expansion, with progress likely slow.

3 years+ (Medium confidence)

Distributed inference will form a stable complementary landscape with centralized cloud inference, capturing approximately 25-35% of the edge inference market share.
