A Panoramic View of AI Inference Optimization Tools: From vLLM to TensorRT-LLM, A Selection Guide in a Fragmented Landscape

Evaluation Background

In April 2026, NVIDIA released TensorRT-LLM v0.16, which achieved a 32% throughput improvement over v0.15 for FP8 inference on H200 and added a vLLM compatibility interface [Source: NVIDIA whitepaper]. Concurrently, the arXiv paper "Performance Analysis of Mainstream LLM Inference Frameworks" (2026-04-10) provided a quantified comparison of five types of tools. The AI inference market is evolving from a focus solely on hardware performance to a comprehensive competition involving hardware, software ecosystems, and deployment flexibility. This evaluation aims to provide a data-driven decision basis for enterprise selection by quantitatively analyzing mainstream solutions (vLLM, TensorRT-LLM, the Intel Gaudi3 toolchain, etc.) based on a unified third-party benchmark across five core dimensions: throughput vs. latency trade-off, multi-GPU scalability, hardware affinity, ecosystem lock-in risk, and operational complexity. All vendor-claimed data are cited with sources and their limitations explained.

Product Overview

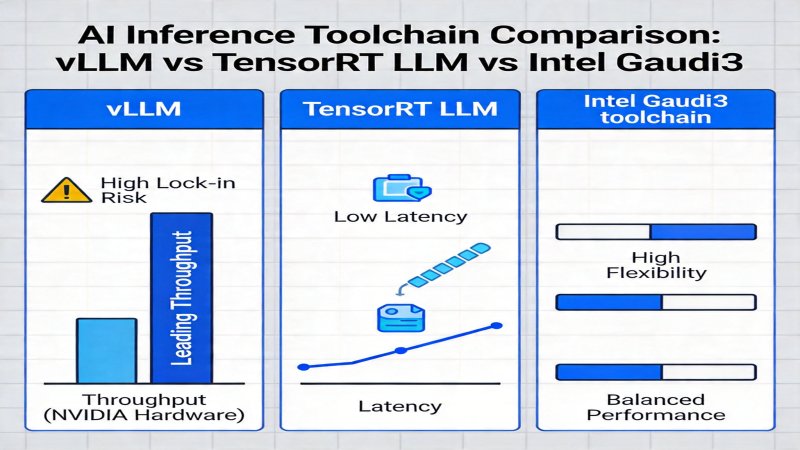

The current market presents three main approaches, each with distinct positioning and areas of advantage.

- vLLM

  - Positioning: Open-source inference optimization framework, focusing on low latency and flexibility, supporting custom scheduling.

  - Core Features: Streamed inference latency optimization, compatibility with various hardware, open-source ecosystem, high production SLO attainment rate.

  - Vendor: None; vLLM is a community-maintained open-source project that originated at UC Berkeley.

  - Latest Development: According to OpenAI's optimization practices from February 2026, batch processing throughput can be increased by 24% through custom scheduling logic, with pipeline parallel efficiency reaching 92% on a 32-card cluster [Source: OpenAI blog].

- TensorRT-LLM

  - Positioning: NVIDIA's official inference optimization tool, deeply optimized for NVIDIA GPUs, focusing on high throughput and hardware performance.

  - Core Features: FP8/INT4 quantization support, efficient multi-GPU parallelism, tight binding with CUDA cores, enterprise-grade SLO guarantees.

  - Vendor: NVIDIA.

  - Latest Development: The v0.16 version released in April 2026 shows a 32% throughput improvement over v0.15 for FP8 inference on H200, an 18% reduction in streamed latency, and adds a vLLM compatibility interface [Source: NVIDIA whitepaper].

- Intel Gaudi3 Toolchain

  - Positioning: Inference toolchain adapted for Gaudi3 hardware, emphasizing open-source compatibility and cost-effectiveness.

  - Core Features: Full compatibility with open-source tool stacks (e.g., vLLM), high throughput compared to H100, no vendor lock-in risk, good tensor parallel scalability.

  - Vendor: Intel.

  - Latest Development: A March 2026 whitepaper indicates that under vLLM deployment, Gaudi3 achieves 12% higher throughput than H100 with comparable latency, supporting linear scaling across 8 cards [Source: Intel whitepaper].

  - Data Note: This is Intel's unilateral claim. Test conditions: Llama3-70B model, FP16 precision, input/output length of 512 tokens each, batch size 8. As the comparison target is H100 and test conditions differ from the third-party benchmark, this data is not used as a core basis in subsequent cross-comparisons, only reflecting Gaudi3's potential under specific open-source stacks.

Core Capability Comparison

Core comparison is based on the third-party evaluation "Performance Analysis of Mainstream LLM Inference Frameworks" (arXiv:2604.07892, 2026-04-10). Unified test conditions: Llama3-70B model, H100 hardware, FP16 precision, unless otherwise specified. Data from the latest vendor versions serves as supplementary reference.

| Evaluation Dimension | vLLM | TensorRT-LLM | Intel Gaudi3 Toolchain |
|---|---|---|---|
| Throughput vs. Latency Trade-off | P99 latency in streaming scenarios (batch=1) is 15% lower than TensorRT-LLM. Throughput in batch processing scenarios (batch=16) is lower than TensorRT-LLM. | Throughput in batch processing scenarios (batch=16) is 27% higher than vLLM. Latency optimization in streaming scenarios is ongoing (v0.16 shows 18% latency reduction on H200 FP8). | Vendor Claim: Under vLLM deployment, Gaudi3 throughput is 12% higher than H100 with comparable latency (based on FP16, batch=8). |
| Multi-GPU Scalability | 86% tensor parallel efficiency on a 16-card cluster (H100), 92% pipeline parallel efficiency on a 32-card cluster [Source: OpenAI blog]. | 91% tensor parallel efficiency on a 16-card cluster (H100), supports tensor and pipeline parallelism. | Vendor Claim: Supports linear tensor parallel scaling across 8 cards (Gaudi3), 94% pipeline parallel efficiency. |
| Hardware Affinity | Compatible with various hardware (NVIDIA/AMD/Intel, etc.), low adaptation cost. | Deeply optimized for NVIDIA H100/H200, shows 32% FP8 throughput improvement on H200 [Source: NVIDIA whitepaper]. | Optimized for Gaudi3, deployed via open-source stacks like vLLM, high compatibility. |
| Ecosystem Lock-in Risk | Open-source solution, no vendor lock-in risk. | Bound to NVIDIA hardware and CUDA ecosystem, high ecosystem lock-in risk. | Based on open-source tool stacks, no vendor lock-in risk. |
| Operational Complexity | SLO availability 99.95%, relatively lower manpower cost. | SLO availability 99.99%, but manpower cost is 30% higher than vLLM [Source: Cisco guide]. | Operational complexity is moderate, based on open-source tools. |

- Third-party Benchmark: Core comparative data is primarily integrated from the third-party study "Performance Analysis of Mainstream LLM Inference Frameworks" (arXiv:2604.07892, 2026-04-10). This paper conducted controlled variable tests on tools like vLLM and TensorRT-LLM under H100, FP16 conditions, with a relatively objective methodology.

- Vendor Data: Data labeled as "Vendor Claim" originates from respective company whitepapers. It has reference value but requires attention to its bias and different testing baselines. Enterprises are advised to conduct their own POC validation.

- Cisco Guide: The referenced TCO and manpower cost data comes from the "Cisco Data Center AI Inference Selection Guide" (2026-03-22). The "30% higher manpower cost" is an estimate based on enterprise operations team size. Its conclusion of a "22% reduction in comprehensive TCO" is based on specific assumptions: a pure NVIDIA hardware environment, TensorRT-LLM amortizing hardware depreciation and power costs through higher throughput, and a specific software licensing cost model. This data is a case reference, not a universal value.
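
The parallel-efficiency percentages in the comparison table can be translated into an implied speedup over a single device. The sketch below assumes the common definition, efficiency = speedup / number of devices; the benchmark's exact definition is not stated here, so treat the function name and the interpretation as illustrative assumptions.

```python
def effective_speedup(efficiency: float, num_devices: int) -> float:
    """Speedup over one device implied by a parallel efficiency in (0, 1].

    Assumes efficiency = speedup / num_devices (an assumption, not a
    definition taken from the cited benchmark).
    """
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    if num_devices < 1:
        raise ValueError("num_devices must be >= 1")
    return efficiency * num_devices


# Tensor-parallel figures quoted in the table for a 16-card H100 cluster.
vllm_speedup = effective_speedup(0.86, 16)
trt_llm_speedup = effective_speedup(0.91, 16)

print(f"vLLM:         {vllm_speedup:.2f}x over one card")
print(f"TensorRT-LLM: {trt_llm_speedup:.2f}x over one card")
```

Read this way, a five-point efficiency gap on 16 cards corresponds to somewhat less than one card's worth of useful work.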

Applicable Scenario Analysis

Recommended Scenarios

- H200 or Pure NVIDIA GPU Environment: Prioritize TensorRT-LLM. Its deep hardware optimization extracts the most performance from the hardware (e.g., 32% FP8 throughput improvement on H200). Selection Action: On the target hardware, test throughput and P99 latency at batch sizes [4, 16, 32] under FP8 precision using typical business prompts.

- Mixed Hardware Deployment or Flexible Adaptation Scenarios: Prioritize vLLM. Its hardware compatibility significantly reduces adaptation costs, and its open-source nature facilitates customization. Selection Action: Deploy vLLM on each hardware type, use the lm-evaluation-harness framework to verify that output quality is consistent across hardware, and run a load test on each to compare throughput, latency, and SLO attainment rates.

- Gaudi3 Hardware Environment: Choose the Intel Gaudi3 Toolchain. It offers the flexibility of an open-source ecosystem while pursuing performance comparable to mainstream GPUs. Selection Action: Strictly reproduce the Intel whitepaper test conditions and conduct a comparative POC with an existing NVIDIA cluster using the same model and configuration to verify its claimed cost-performance advantage.
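
The selection actions above all reduce to the same measurement: throughput and tail latency at several batch sizes. The following is a minimal, framework-agnostic sketch of that sweep. The `generate` callable is a hypothetical stand-in for whatever client wraps the tool under test (it is not an API of vLLM or TensorRT-LLM), and `fake_generate` exists only so the example runs. Batches are issued sequentially, so this does not model concurrent load, and a P99 computed from a handful of batches is only indicative.

```python
import math
import time
from typing import Callable, Dict, List, Sequence


def percentile(values: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile of values, for pct in (0, 100]."""
    if not values:
        raise ValueError("values must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based nearest rank
    return ordered[rank - 1]


def sweep(
    generate: Callable[[List[str]], int],
    prompts: List[str],
    batch_sizes: Sequence[int],
) -> Dict[int, Dict[str, float]]:
    """Run every prompt at each batch size; record tokens/s and P99 batch latency.

    `generate` must return the number of output tokens produced for the batch.
    """
    if not prompts:
        raise ValueError("prompts must not be empty")
    results: Dict[int, Dict[str, float]] = {}
    for batch_size in batch_sizes:
        latencies: List[float] = []
        total_tokens = 0
        started = time.perf_counter()
        for i in range(0, len(prompts), batch_size):
            batch = prompts[i:i + batch_size]
            t0 = time.perf_counter()
            total_tokens += generate(batch)
            latencies.append(time.perf_counter() - t0)
        elapsed = max(time.perf_counter() - started, 1e-9)
        results[batch_size] = {
            "tokens": float(total_tokens),
            "tokens_per_s": total_tokens / elapsed,
            "p99_latency_s": percentile(latencies, 99),
        }
    return results


def fake_generate(batch: List[str]) -> int:
    """Hypothetical backend: pretends each prompt yields 8 output tokens."""
    return 8 * len(batch)


for size, stats in sweep(fake_generate, ["typical business prompt"] * 32, [4, 16, 32]).items():
    print(size, stats)
```

In a real POC, `fake_generate` would be replaced by a call into the deployed serving endpoint, and the prompt list by a sample of production traffic.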

Not Recommended Scenarios

- Non-NVIDIA Hardware Environments: Avoid using TensorRT-LLM. Its deep binding to the CUDA ecosystem will lead to performance loss and compatibility issues if forced to adapt.

- Highly Latency-Sensitive Streaming Scenarios (e.g., real-time dialogue): Avoid tools optimized solely for batch processing. Prioritize testing proven low-latency solutions like vLLM, focusing on P99 latency metrics.

- Scenarios with Limited Operational Resources: Avoid high-complexity solutions like TensorRT-LLM. The additional 30% manpower cost (from the Cisco guide, based on enterprise ops team size estimate) may become an operational burden.

Key Judgments

Based on current data and verifiable trends, the following key judgments are formed:

| Key Judgment | Confidence | Importance | Specific Action Recommendations |
|---|---|---|---|
| TensorRT-LLM leads in performance on NVIDIA hardware but carries high ecosystem lock-in risk, suitable for single-hardware environments; vLLM shows clear advantages in flexibility and latency, suitable for mixed-deployment scenarios. | High | Helps enterprises choose the optimal tool based on hardware and scenario, balancing performance and risk. | Enterprises should base tool selection on hardware type, throughput/latency requirements, and operational capabilities, prioritizing testing in actual scenarios. Specific testing method: Use a dataset of typical business prompts, test candidate tools on target hardware for throughput, P99/P95 latency at batch sizes [1, 4, 16], and record GPU utilization and memory consumption. |
| The performance gap between open-source tools (e.g., vLLM) and specialized solutions (e.g., TensorRT-LLM) persists, but the compatibility of open-source ecosystems is becoming a key competitive dimension. | High | Impacts long-term technology investment decisions, avoiding vendor lock-in. | While pursuing ultimate performance, evaluate ecosystem lock-in risks and monitor compatibility progress between tools. Evidence: TensorRT-LLM v0.16's new vLLM compatibility interface indicates specialized solutions are attempting to integrate into open-source ecosystems; however, current third-party benchmarks show a 27% throughput gap in batch processing under unified conditions (H100, FP16). Performance catch-up by open-source tools is a long-term process. |

Selection Recommendations

Facing the fragmented tool landscape, enterprises are advised to follow these steps for decision-making:

- Define Hardware Baseline: First, identify the core inference hardware (NVIDIA/Intel/other) for the present and the next 1-2 years. This is the primary constraint for selection.

- Quantify Performance Requirements: Through POC testing, clarify the business's sensitivity to throughput, latency, and SLA. For example, real-time dialogue applications need to prioritize testing streaming latency.

- Evaluate Long-term Costs: Comprehensively calculate the Total Cost of Ownership (TCO), including software licensing (if any), adaptation development, operational manpower, and hardware procurement. Carefully assess the applicability of external TCO reports (like Cisco's 22% reduction) based on one's own situation.

- Adopt a Layered Strategy: For core, stable production workloads, use TensorRT-LLM to pursue ultimate performance; for experimental, multi-hardware, or rapidly iterating scenarios, use vLLM to ensure flexibility.

- Continuously Track the Ecosystem: Closely monitor future version updates like TensorRT-LLM v0.17 to see if they further open the ecosystem or change the performance landscape. Simultaneously, follow the evolution of other mainstream tools (e.g., TGI, DeepSpeed) to keep options open.
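
The long-term cost step can be made concrete with a small model that divides annual cost by relative throughput. This is a sketch under stated assumptions: the `CostInputs` fields and the absolute cost figures (100 and 50, in arbitrary units) are hypothetical, while the 27% throughput advantage and 30% manpower premium are the figures quoted earlier in this evaluation. It is not a reconstruction of the Cisco TCO model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CostInputs:
    """Annual cost components in arbitrary but consistent units (hypothetical)."""
    hardware: float                    # depreciation plus power
    ops_manpower: float                # operations staffing
    licensing: float = 0.0             # software licensing, if any
    adaptation: float = 0.0            # adaptation and integration development
    relative_throughput: float = 1.0   # throughput relative to the baseline tool


def cost_per_unit_throughput(c: CostInputs) -> float:
    """Total annual cost divided by relative throughput (lower is better)."""
    if c.relative_throughput <= 0:
        raise ValueError("relative_throughput must be positive")
    total = c.hardware + c.ops_manpower + c.licensing + c.adaptation
    return total / c.relative_throughput


# Hypothetical baseline: hardware costs 100 units, operations staffing 50 units.
baseline = CostInputs(hardware=100.0, ops_manpower=50.0)

# Same hardware, with the quoted +30% manpower cost and +27% batch throughput.
alternative = CostInputs(
    hardware=100.0,
    ops_manpower=50.0 * 1.30,
    relative_throughput=1.27,
)

print(f"baseline:    {cost_per_unit_throughput(baseline):.2f}")
print(f"alternative: {cost_per_unit_throughput(alternative):.2f}")
```

Under this simplified model (identical hardware cost, no licensing or adaptation cost), the higher-throughput option is cheaper per unit of throughput whenever operations cost is less than nine times hardware cost, since H + 1.3·O < 1.27·(H + O) reduces to O < 9·H. Real deployments add terms that can move this threshold in either direction.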

Outstanding Issues Note:

- Regarding "specific performance data for other mainstream inference tools," this evaluation focuses on the three core tools. Detailed quantitative data for other tools (e.g., TGI, Triton Inference Server) require reference to broader benchmark reports.

- Regarding "differences in enterprise-grade SLO guarantees in actual production environments," the difference between 99.99% and 99.95% availability translates into significantly different service interruption times in ultra-large-scale, high-concurrency scenarios. Enterprises need to evaluate based on their business tolerance.

- Regarding "whether future version updates will change the landscape," continuous attention is needed on the depth of TensorRT-LLM's compatibility with the open-source ecosystem and the progress of open-source tools like vLLM in quantization and ultimate performance optimization.
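
To make the availability gap tangible, the two SLO figures can be converted into permitted downtime. The sketch below assumes a 365-day year and treats availability as the fraction of wall-clock time the service is up; the function name is illustrative.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, assuming a 365-day year


def annual_downtime_minutes(availability_pct: float) -> float:
    """Maximum downtime per year permitted by an availability percentage."""
    if not 0.0 <= availability_pct <= 100.0:
        raise ValueError("availability_pct must be between 0 and 100")
    return (100.0 - availability_pct) / 100.0 * MINUTES_PER_YEAR


for slo in (99.95, 99.99):
    minutes = annual_downtime_minutes(slo)
    print(f"{slo}% availability -> {minutes:.1f} min/year ({minutes / 60:.2f} h)")
```

That works out to roughly 262.8 minutes per year at 99.95% versus roughly 52.6 minutes at 99.99%, a fivefold difference in allowable interruption.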

Why it Matters

Positioning: Duopoly

Key Factor: The core differentiator lies in the fundamental trade-off between performance and ecosystem/flexibility. NVIDIA, with TensorRT-LLM, has built a significant performance moat (e.g., 27% higher batch throughput) in pure NVIDIA hardware environments (especially H100/H200) through deep hardware binding and vertical software stack integration. vLLM, on the other hand, has formed strong ecosystem appeal in mixed-hardware deployment and flexible adaptation scenarios through its open-source model, hardware compatibility, and low-latency advantages. Intel Gaudi3, as a challenger, enters the market with open-source compatibility and claimed cost-performance, but its performance data lacks unified benchmark verification, limiting its current market influence. The current landscape is a direct competition between NVIDIA's 'performance-first' route and vLLM's 'open-ecosystem' route.

Stage: Growth Phase
