Event: Interpreting the Six-Company AI Contract Procurement Architecture

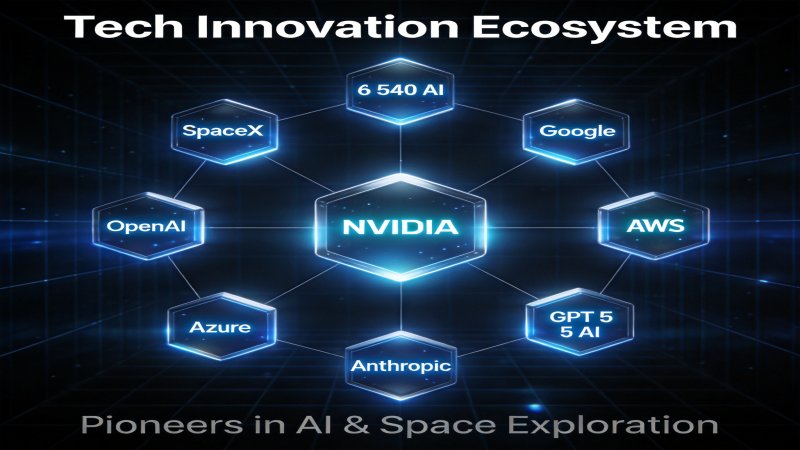

On May 1, 2026, the US Department of Defense officially announced landmark AI integration agreements with six technology companies, with a total contract value of $54 billion. The six companies are: Google, OpenAI, NVIDIA, AWS, Microsoft, and SpaceX. This announcement triggered strong reactions across both the AI industry and defense sector, as it marks the first time the US military has systematically integrated AI capabilities into its core combat and intelligence systems.

Viewed as a procurement architecture, this contract is not a simple technology purchase but a carefully designed, vertically integrated AI stack contract. Rather than selecting a single AI solution vendor, the DoD chose specialized suppliers for different layers of the AI technology stack:

- SpaceX: Satellite communications and global connectivity

- NVIDIA: Core computing power for AI training and inference

- AWS/Azure: Cloud infrastructure and distributed computing

- Google/OpenAI: AI model capabilities

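This tiered procurement strategy is meant to keep the DoD from being locked into a single vendor for the whole stack while drawing on each company's strengths in its own specialty. The hedge is uneven, though: the cloud and model layers each have two suppliers, whereas connectivity and compute each rest on one.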
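
One way to read the layer list above is as a mapping from stack layer to suppliers. The sketch below is only an illustration of that reading: the layer keys and helper names are made up for this example, and the vendor assignments are taken from the list as written, not from any contract document.

```python
# Layer-to-supplier mapping as described in the list above.
# The layer keys are illustrative labels, not official terms.
STACK = {
    "connectivity": ["SpaceX"],
    "compute": ["NVIDIA"],
    "cloud": ["AWS", "Microsoft"],
    "models": ["Google", "OpenAI"],
}


def single_vendor_layers(stack):
    """Return the layers that depend on exactly one supplier."""
    return [layer for layer, vendors in stack.items() if len(vendors) == 1]


def all_vendors(stack):
    """Return the sorted set of suppliers across every layer."""
    return sorted({v for vendors in stack.values() for v in vendors})


if __name__ == "__main__":
    print("Suppliers:", all_vendors(STACK))
    print("Single-supplier layers:", single_vendor_layers(STACK))
```

Read this way, the stack has six suppliers in total, and the two layers with a single supplier are the places where lock-in risk remains.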

Vertically Integrated AI Stack: Every Layer from Satellite to Model

Layer 1: SpaceX's Global Connectivity Layer

SpaceX's role in the Pentagon AI Stack extends far beyond that of a traditional contractor. The Starlink satellite network provides low-latency connectivity for AI systems across most of the globe. In modern warfare, real-time intelligence transmission and edge computing capabilities are critical. SpaceX's satellite network lets AI systems operate wherever coverage exists, whether in data centers or on battlefields.

This capability is essential for "distributed AI warfare":

- Battlefield Edge AI: Deploy AI inference capabilities anywhere Starlink coverage exists

- Real-time Situational Awareness: Sensor data transmitted in real-time via Starlink to AI analysis systems

- Resilient Communications: Even if partial ground infrastructure is destroyed, AI systems maintain connectivity via satellite

Layer 2: NVIDIA's Computing Layer

NVIDIA's position in the Pentagon AI Stack is irreplaceable. NVIDIA is the core supplier of computing power for AI training and inference, and its GPUs form the basis for most advanced AI models, although some developers also train on their own accelerators (Google, for example, uses its TPUs). From GPT-5.5 to Claude Mythos, training these models depends heavily on data-center GPUs such as NVIDIA's H100 and B200 series.

The Pentagon chose NVIDIA because:

- Technical Leadership: NVIDIA's AI training and inference leadership is uncontested

- CUDA Ecosystem: NVIDIA's CUDA ecosystem provides the most comprehensive AI development toolchain

- Security Compliance: NVIDIA has passed multiple US government security certifications

- Supply Chain Control: As a US-based company, NVIDIA ensures supply chain security

Layer 3: AWS/Azure Cloud Infrastructure Layer

AWS and Microsoft Azure jointly provide the cloud infrastructure layer for the Pentagon AI Stack. Their roles include:

- Distributed Computing: Scalable computing resources for AI training and inference

- Data Management: Processing and storing massive data from various sensors

- Secure Isolation: Isolated computing environments meeting military security requirements

- Global Coverage: Data centers worldwide ensuring low-latency AI system access

The DoD's simultaneous use of both AWS and Azure, rather than a single vendor, reflects its emphasis on supplier diversity and resilience.

Layer 4: Google/OpenAI Model Layer

Google and OpenAI were selected to provide AI model capabilities—the most strategically valuable layer in the Pentagon AI Stack. These two companies' model capabilities represent the cutting edge of current AI technology:

- OpenAI: GPT-5.5 series models, particularly GPT-5.5-Cyber's specialized capabilities in cybersecurity

- Google: Gemini series models, with advantages in multimodal understanding and long-context processing

Significantly, the recent cybersecurity capability breakthroughs of both models (TLO testing) served as a driving force behind the Pentagon's procurement decision. AI models capable of passing complex network intrusion tests possess abilities relevant to cyber warfare operations.

Google's 8-Year Transformation: From Maven Protests to Military AI

Google's signing of this military AI contract in 2026 marks a fundamental shift in the company's relationship with the US military. Looking back, the transformation did not come easily:

2018: The Project Maven Controversy

In 2018, Google's participation in the US DoD's Project Maven (using AI to analyze drone surveillance footage) triggered intense internal protests. Thousands of employees signed an open letter demanding that Google withdraw from the project and commit to never building warfare technology. Google ultimately committed to not renewing the Project Maven contract and, in June 2018, published AI Principles that explicitly ruled out developing AI for use in weapons.

Turning Point: Anthropic's "Vacated" Position

After Anthropic was excluded from the Pentagon AI supply chain over its refusal to support autonomous weapons and mass surveillance, Google saw a strategic opportunity to fill the gap. OpenAI, despite its technical leadership, faced similar ethical controversies. Google believed that by demonstrating responsible military AI applications, it could strike a balance between national security and AI ethics.

2026: Official Contract Signing

Google's new contract with the Pentagon explicitly defines AI usage boundaries:

- Prohibited from lethal autonomous weapon decision-making

- Restricted to non-lethal tasks like target identification and situational analysis

- All AI decisions require human oversight

- Independent AI ethics review board established

This transformation reflects the complex negotiation between commercial interests and ethical stances for major technology companies. For Google, the opportunity cost of losing the defense contract could reach billions of dollars.

Anthropic's Exclusion: The Business Cost of Ethical Stance

Anthropic's exclusion from the Pentagon AI contract is the most controversial outcome of this procurement. Its explicitly stated positions have made it the "spokesperson" for AI ethics, but at a significant business cost.

Anthropic's Ethical Framework

Anthropic's AI ethics stance can be summarized in these core principles:

- Opposition to Autonomous Weapons: Claude should not be used to make or support lethal use-of-force decisions

- Opposition to Mass Surveillance: AI systems should not be used for indiscriminate mass citizen surveillance

- Explainability Requirements: Military AI system decision processes must be explainable

- Human Primacy: AI should always remain under human supervision in life-and-death military decisions

Business Costs

The costs of Anthropic's ethical stance are concrete:

- $54 Billion Contract: Anthropic was completely excluded

- Strategic Lockout Risk: Being excluded from the Pentagon AI supply chain may cause its technology trajectory to diverge from mainstream defense ecosystems

- Talent and Investor Effects: Some developers may prefer to join Anthropic because of its ethical stance, while some investors hold reservations about its limited commercial prospects

Strategic Choice

Anthropic CEO Dario Amodei has repeatedly stated publicly that the company is willing to accept "the business cost of ethical stance." This statement is both a values declaration and a business strategy positioning—Anthropic is becoming the brand representative for "responsible AI," attracting enterprises and institutions that equally value AI ethics.

The long-term effectiveness of this "ethics premium" strategy remains to be seen. In the short term, Anthropic may find alternative growth paths in the civilian AI market; in the long term, if military AI becomes one of the AI industry's largest revenue sources, its stance may face increasing commercial pressure.

The Fundamental Challenge of Probabilistic Models in Deterministic Systems

The Pentagon AI Stack faces a fundamental technical philosophy question: How to deploy probabilistic AI models in military systems that depend on determinism?

Inherent Limitations of Probabilistic Models

Modern AI models, including GPT-5.5 and Claude Mythos, are probabilistic language models: they produce output by sampling from a probability distribution rather than by following fixed rules. This means:

- Output Uncertainty: Same inputs may produce different outputs

- Hallucination Risk: AI may generate information that appears reasonable but is actually incorrect

- Adversarial Vulnerability: Carefully crafted inputs may cause AI to produce unexpected outputs

In civilian scenarios, these limitations may cause inconvenience; in military scenarios, they could lead to catastrophic consequences.
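
The "same inputs, different outputs" point can be illustrated with a toy sampler. This is a minimal sketch, not how any particular model is implemented: the three logits are invented scores, and the function names are hypothetical. It contrasts temperature sampling, which can return different tokens for the same input, with greedy selection, which always returns the highest-scoring token in this toy setting.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Convert raw scores into probabilities; lower temperature sharpens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_token(logits, temperature, rng):
    """Draw one token index at random according to the softmax probabilities."""
    probs = softmax(logits, temperature)
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]


def greedy_token(logits):
    """Always pick the highest-scoring token index."""
    return max(range(len(logits)), key=lambda i: logits[i])


if __name__ == "__main__":
    logits = [2.0, 1.5, 0.3]  # hypothetical scores for three candidate tokens
    rng = random.Random()
    samples = [sample_token(logits, 1.0, rng) for _ in range(1000)]
    print("distinct sampled tokens:", sorted(set(samples)))
    print("greedy token:", greedy_token(logits))
```

Greedy selection removes the sampling randomness in this sketch, but it does not address hallucination or adversarial inputs, which come from what the model has learned, not from how tokens are drawn.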

Special Requirements for Life-Critical Systems

Military and defense systems have reliability requirements far exceeding civilian systems:

| Requirement | Civilian AI System | Military AI System |
|---|---|---|
| Response Time | Seconds acceptable | Milliseconds required |
| Reliability | 99% acceptable | 99.999% required |
| Explainability | Preferred | Mandatory |
| Failure Consequences | User experience decline | Lives at stake |
| Verification Standard | Market testing | Government certification |
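
To make the gap between the two reliability figures concrete, the sketch below converts each percentage into allowed downtime per year. It assumes the "Reliability" row can be read as availability (the fraction of time the system is up), which the table does not state explicitly; the function name is illustrative.

```python
SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # 31,536,000 seconds, ignoring leap years


def allowed_downtime_seconds(availability):
    """Seconds per year a system may be down at the given availability (0..1)."""
    return (1.0 - availability) * SECONDS_PER_YEAR


if __name__ == "__main__":
    for availability in (0.99, 0.99999):
        seconds = allowed_downtime_seconds(availability)
        print(f"{availability:.3%}: {seconds / 3600:.2f} hours per year "
              f"({seconds / 60:.1f} minutes)")
```

Under that assumption, 99% allows roughly 87.6 hours of downtime a year, while 99.999% allows only about 5.3 minutes, a difference of three orders of magnitude.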

Current Solutions

The Pentagon's response strategy is the "Human-in-the-Loop" model:

- AI provides analytical recommendations, humans retain final decision authority

- AI outputs require human review before execution

- Critical decisions completely exclude AI involvement

This approach is pragmatic in the short term, but it also limits how effective AI systems can be in actual combat. If every AI recommendation requires human verification, much of the advantage of AI-accelerated decision-making is lost.
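
The three rules listed above can be sketched as a simple approval gate. This is a hypothetical illustration, not a description of any deployed system: the `Criticality` levels, the `Recommendation` type, and the `decide` function are all invented for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Criticality(Enum):
    ROUTINE = "routine"
    CRITICAL = "critical"


@dataclass(frozen=True)
class Recommendation:
    action: str     # what the AI suggests doing
    rationale: str  # explanation shown to the human reviewer


def decide(criticality, recommendation, human_approves):
    """Return the action to execute, or None if nothing is approved.

    human_approves is a callable that takes a Recommendation and returns
    True or False; it stands in for the human reviewer.
    """
    if criticality is Criticality.CRITICAL:
        # Rule 3: critical decisions exclude AI involvement entirely, so the
        # recommendation is ignored and the case goes to a human-only process.
        return None
    if recommendation is None:
        return None
    # Rules 1 and 2: the AI only recommends; a human must approve first.
    if human_approves(recommendation):
        return recommendation.action
    return None


if __name__ == "__main__":
    rec = Recommendation("flag_for_analyst_review", "pattern matches prior reports")
    print(decide(Criticality.ROUTINE, rec, lambda r: True))
    print(decide(Criticality.ROUTINE, rec, lambda r: False))
    print(decide(Criticality.CRITICAL, rec, lambda r: True))
```

The human call sits on every path that executes an action, which is exactly where the latency cost described above comes from.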

Technology Development Directions

Technical directions for resolving the tension between probabilistic models and deterministic systems include:

- Explainable AI: Improve transparency of AI decision processes, enabling humans to better understand and verify AI recommendations

- Confidence Calibration: Enable AI to accurately express confidence in output correctness, allowing humans to decide whether to adopt based on this information

- Adversarial Robustness: Improve AI model resistance to malicious inputs

- Hybrid Architectures: Combine probabilistic AI with traditional rule-based systems, prioritizing deterministic rules in high-risk scenarios
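
Two of these directions lend themselves to a short sketch. The first function computes expected calibration error (ECE), a common way to measure how far stated confidence is from observed accuracy. The second shows one possible hybrid routing rule in which a deterministic rule always wins and low-confidence model output is escalated. The function names, the 0.95 threshold, and the sample data are assumptions made for illustration.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average gap between mean confidence and accuracy per bin."""
    if len(confidences) != len(correct) or not confidences:
        raise ValueError("inputs must be non-empty and the same length")
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        index = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the last bin
        bins[index].append((conf, ok))
    total = len(confidences)
    ece = 0.0
    for items in bins:
        if not items:
            continue
        mean_conf = sum(c for c, _ in items) / len(items)
        accuracy = sum(1 for _, ok in items if ok) / len(items)
        ece += (len(items) / total) * abs(mean_conf - accuracy)
    return ece


def hybrid_decision(model_label, model_confidence, rule_label=None, threshold=0.95):
    """Prefer a deterministic rule; otherwise accept only confident model output."""
    if rule_label is not None:
        return rule_label, "rule"
    if model_confidence >= threshold:
        return model_label, "model"
    return None, "escalate_to_human"


if __name__ == "__main__":
    # A model that claims 90% confidence but is right only half the time.
    overconfident = expected_calibration_error([0.9] * 10, [True] * 5 + [False] * 5)
    print(f"ECE of overconfident model: {overconfident:.2f}")
    print(hybrid_decision("benign", 0.99))
    print(hybrid_decision("benign", 0.60))
    print(hybrid_decision("benign", 0.99, rule_label="blocked"))
```

A well-calibrated model has an ECE near zero, which is what makes a confidence threshold like the one in `hybrid_decision` meaningful in the first place.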

Deep Impact on AI Industry Landscape

The Door to Defense AI Market Officially Opens

The $54 billion Pentagon contract is not only the largest government AI procurement contract to date; more importantly, it establishes the framework and standards for defense AI procurement. This framework will influence the design of future defense AI contracts.

Tiered Procurement Becomes Standard: The Pentagon's tiered procurement strategy may become the standard paradigm for defense AI procurement. Defense departments in other countries and regions may emulate this model.

Compliance Requirements Become Barriers: Anthropic's exclusion indicates AI vendors' ethical stances may become barriers to entering defense markets. This will force AI vendors to make explicit choices between commercial interests and ethical positions.

AI Arms Race Enters New Phase

The establishment of the Pentagon AI Stack marks AI militarization's transition from theory to practice. Other countries may be compelled to accelerate their own defense AI programs to counter US technological advantages.

Geopolitical Impact:

- China may increase investment in AI militarization

- Europe may develop independent defense AI capabilities

- Smaller nations may seek AI asymmetric advantages to compensate for conventional military gaps

Industry Consolidation Accelerates

The $54 billion mega-contract will accelerate AI industry consolidation:

- Winner-Takes-All: AI vendors entering the defense supply chain will gain stable revenue sources and valuable combat data

- Accelerated Elimination: AI vendors excluded from the defense market may face greater survival pressure

- Active M&A: M&A activity in the defense AI sector will increase significantly

Strategic Recommendations

For AI Vendors

Contracted Vendors (Google/OpenAI/NVIDIA/AWS/Microsoft/SpaceX):

- Invest in building dedicated defense compliance teams

- Develop product features meeting military AI ethics requirements

- Explore "responsible military AI" brand positioning

Non-Contracted Vendors (especially Anthropic):

- Evaluate long-term strategic value of the defense market

- Find alternative growth paths in civilian AI markets

- Build "ethical AI" brand differentiation

For Enterprise Decision-Makers

- Monitor spillover effects of defense AI technology to civilian markets

- Assess geopolitical risks in AI supply chains

- Prepare for regulatory and compliance changes brought by AI militarization

For Investors

- Defense AI suppliers will gain significant valuation premiums

- "AI + Defense" may become the next hot investment theme

- Monitor policy risks, especially regulatory responses that international AI militarization may trigger

The establishment of the Pentagon AI Stack is a watershed event in the history of AI development. It not only opens the door to the defense AI market but also reshapes the strategic choices available to AI vendors. In this new AI arms race, the balance among technical capability, commercial interest, and ethical stance will become the critical variable determining success or failure.

Why it Matters

The $54B Pentagon AI contract is not only the largest government AI procurement to date but also marks AI militarization's transition from theory to practice. The contract establishes the framework and standards for defense AI procurement and will influence future procurements of this kind. The vertical integration from SpaceX to OpenAI represents a new paradigm for AI warfare systems. Anthropic's exclusion indicates that ethical stance is becoming a hidden barrier to market access. The challenge of applying probabilistic AI models in deterministic military systems may catalyze next-generation explainable AI and hybrid architecture technologies.

DECISION

AI Vendor Strategy: Contracted vendors need to invest in defense compliance teams and build product features that meet military AI ethics requirements. Non-contracted vendors such as Anthropic need to assess the long-term value of the defense market and build "ethical AI" brand differentiation.

Enterprise Decision-Maker Strategy: Monitor spillover effects of defense AI technology to civilian markets. Assess geopolitical risks in AI supply chains. Prepare for regulatory changes brought by AI militarization.

Investor Strategy: Defense AI suppliers will gain significant valuation premiums. "AI + Defense" may become the next hot investment theme. Monitor policy risks and international regulatory responses to AI militarization.

PREDICT

Within 12 months, other countries will announce similar defense AI procurement plans. The capabilities of GPT-5.5 and Claude Mythos will keep iterating, and the Pentagon may add AI attack capabilities to its supplier qualification standards. Explainable AI and hybrid architecture technologies will become investment hotspots. Anthropic may find alternative paths in civilian markets but faces a long-term growth ceiling.
