In-Depth Analysis of Cerebras IPO: A New Landscape of Diversified Competition in the Computing Power Market

1. Background and Overview

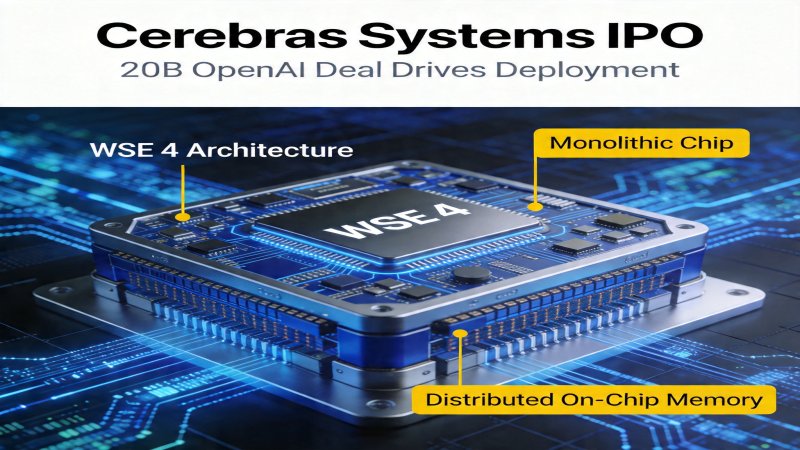

On April 15, 2026, AI computing company Cerebras Systems officially filed its IPO prospectus for a NASDAQ listing, aiming to raise $15 billion at a valuation exceeding $70 billion. The core driver of this IPO is a 7-year, $20 billion cooperation agreement with OpenAI, under which a total of 750 megawatts of WSE chip clusters is planned for deployment by 2030 to handle inference for large models such as GPT-5. This marks the first large-scale commercial validation of Cerebras' "Wafer-Scale Engine" technology route by a top-tier customer, and an attempt to carve out a differentiated competitive path in a market dominated by NVIDIA.

Core Concepts:

- Wafer-Scale Engine (WSE): Builds an entire wafer-sized die (e.g., 46,225 mm²) as a single chip rather than dicing the wafer into many discrete chips, aiming to overcome the interconnect bandwidth and latency bottlenecks inherent in traditional multi-chip solutions.

- Distributed Shared Memory: The core feature of the WSE architecture, eliminating external high-bandwidth memory (HBM) and integrating large-capacity SRAM (44GB for WSE-4) distributed across the wafer. Computing cores access this memory directly via a high-speed on-chip network, significantly reducing data movement latency and power consumption.

Evolution Context: Traditional GPUs scale computing power by stacking HBM and using advanced packaging (e.g., CoWoS), but face challenges such as the "memory wall" and diminishing returns in cluster scaling efficiency. Since launching its first-generation WSE in 2019, Cerebras has stuck to the full-wafer integration route, focusing on the scaling challenges of ultra-large models. This IPO and the release of WSE-4 mark the point at which its technology route moves from R&D validation to large-scale commercial deployment and capital expansion.

Why Now: According to the prospectus, Cerebras' 2025 revenue reached $12.8 billion, a 217% year-over-year increase. Its fourth-generation product, WSE-4, explicitly targets large-model inference, arriving just as demand for AI inference computing power is surging and the market is searching for NVIDIA alternatives. According to a Wall Street Journal analysis on April 18, 2026, the global AI inference computing power market is projected to reach $1.2 trillion in 2026, giving challengers like Cerebras a vast market to compete for.

2. Architecture Layers

The Cerebras WSE-4 system employs a three-layer architecture designed to maximize on-chip data-flow efficiency and minimize reliance on external HBM and complex interconnects.

- On-Chip Compute Layer: Integrates 900,000 AI-optimized compute cores supporting mixed-precision arithmetic (FP8, FP16, BF16), designed for the computational patterns of large models such as Transformers.

- On-Chip Memory & Interconnect Layer: This is the differentiated core of the WSE architecture. The 44GB of SRAM is distributed across the wafer and tightly coupled to the compute cores at an aggregate bandwidth of 1.2PB/s. All cores are connected by a two-dimensional mesh on-chip network, enabling extremely low-latency communication.

- System Cluster Layer: A single CS-4 system already provides substantial compute on its own, and multiple CS-4 systems can form larger clusters over external networks (e.g., InfiniBand). The software stack includes cluster management tools and inference frameworks co-optimized with customers (e.g., OpenAI) and is responsible for efficiently mapping large models onto the entire hardware system.
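The on-chip interconnect described above is a two-dimensional mesh. As an illustration only, the sketch below models dimension-order (XY) routing, a common textbook scheme for mesh networks-on-chip in which a message first travels along the x axis and then along the y axis. The source does not say which routing algorithm the WSE actually uses; the function names and the per-hop latency parameter are hypothetical.

```python
from typing import List, Tuple

Coord = Tuple[int, int]  # (x, y) position of a core on the mesh


def xy_route(src: Coord, dst: Coord) -> List[Coord]:
    """Dimension-order (XY) routing: move along x first, then along y.

    Returns every core the message visits, including src and dst.
    """
    x, y = src
    path = [(x, y)]
    step_x = 1 if dst[0] > x else -1
    while x != dst[0]:
        x += step_x
        path.append((x, y))
    step_y = 1 if dst[1] > y else -1
    while y != dst[1]:
        y += step_y
        path.append((x, y))
    return path


def hop_count(src: Coord, dst: Coord) -> int:
    """On a 2D mesh, XY routing takes exactly the Manhattan distance in hops."""
    return abs(src[0] - dst[0]) + abs(src[1] - dst[1])


def latency_ns(src: Coord, dst: Coord, ns_per_hop: float = 1.0) -> float:
    """Hypothetical latency model: a fixed, assumed cost per router hop."""
    return hop_count(src, dst) * ns_per_hop


if __name__ == "__main__":
    print(xy_route((0, 0), (3, 2)))  # [(0, 0), (1, 0), (2, 0), (3, 0), (3, 1), (3, 2)]
    print(hop_count((0, 0), (3, 2)))  # 5
```

Because hop count grows with Manhattan distance, traffic between distant regions of a large wafer crosses many routers, which suggests why a mapping step that keeps communicating work on neighbouring cores is useful in a mesh design.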

3. Key Technologies

3.1 Wafer-Scale Integration Technology

- Problem Solved: Traditional solutions build clusters from multiple GPU chips interconnected via PCIe or NVLink, whose bandwidth (typically hundreds of GB/s to a few TB/s) is far lower, and whose latency far higher, than on-chip communication. This inter-chip link becomes the main bottleneck for scaling computing power.

- Core Principle: Cerebras manufactures the entire wafer (based on TSMC's 5nm process, area 46,225 mm²) as a single chip, integrating 4.2 trillion transistors. All 900,000 compute cores are directly interconnected via an on-chip 2D Mesh network with communication bandwidth up to 1.2PB/s and latency reduced to the nanosecond level. This is equivalent to "condensing" the communication network of a super-large-scale computing cluster onto a single wafer.

- Measured Effect: According to a third-party academic evaluation published in October 2025 (arXiv:2510.03472), in a 70B-parameter large-model inference task, a WSE-3 cluster of 12 CS-3 systems achieved a linear scaling efficiency (measured speedup divided by the ideal linear speedup for the added hardware) as high as 94%. By comparison, an NVIDIA H100 cluster with equivalent peak computing power interconnected via InfiniBand achieved only 68%. WSE-4's on-chip network has been further optimized and is expected to maintain or extend this advantage, although this remains a projection rather than a measured result.
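Scaling efficiency as used above can be computed as measured speedup divided by ideal linear speedup. A minimal sketch, assuming throughput (e.g., tokens per second) is measured for a baseline configuration and for a larger cluster; the function name is hypothetical, and the numbers in the usage lines are made up for illustration, not taken from arXiv:2510.03472.

```python
def scaling_efficiency(base_throughput: float, base_units: int,
                       scaled_throughput: float, scaled_units: int) -> float:
    """Measured speedup divided by ideal (linear) speedup.

    1.0 means perfectly linear scaling; 0.94 means 94% of linear.
    """
    if base_throughput <= 0 or base_units <= 0 or scaled_units <= 0:
        raise ValueError("throughputs and unit counts must be positive")
    measured_speedup = scaled_throughput / base_throughput
    ideal_speedup = scaled_units / base_units
    return measured_speedup / ideal_speedup


if __name__ == "__main__":
    # Hypothetical measurements: 1 system at 1,000 tokens/s, 12 systems at 11,280 tokens/s.
    eff = scaling_efficiency(1000.0, 1, 11280.0, 12)
    print(f"{eff:.2f}")  # 0.94
```

Read the other way, at 94% efficiency 12 systems deliver the throughput of about 11.3 perfectly scaled systems, while at 68% they deliver about 8.2.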

3.2 Distributed Shared Memory Architecture

- Problem Solved: The "memory wall" problem. The performance of GPU compute units is limited by the speed and power consumption of reading data from external HBM. Data movement between HBM and compute cores consumes significant time and energy, becoming a major bottleneck, especially in inference scenarios sensitive to latency and energy efficiency.

- Core Principle: The WSE architecture abandons external HBM entirely, integrating 44GB of SRAM distributed across the wafer, adjacent to the compute cores. The cores access this memory at an aggregate bandwidth of 1.2PB/s over very short physical distances. During inference, model parameters can be loaded into on-chip memory once, and subsequent data movement for computation happens almost entirely on-chip.

- Measured Effect & Limitations: According to the Cerebras WSE-4 technical whitepaper, for typical large-model inference workloads its energy efficiency (performance per watt) is claimed to be 6.8 times that of NVIDIA's H100, with a 42% lower total cluster deployment cost. Note: no public, like-for-like third-party benchmark (e.g., MLPerf Inference) currently verifies these figures. The claimed advantage is a vendor assertion; its actual benefit requires independent verification at equivalent optimization levels on specific workloads.
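The "memory wall" argument in this subsection can be made concrete with back-of-envelope arithmetic. The sketch below takes the article's WSE-4 figures (44GB SRAM, 1.2PB/s) as inputs rather than verified specifications. It checks how many wafers are needed just to hold a model's weights, and computes a memory-bound lower limit on per-token decode time at batch size 1. The function names are hypothetical, and the 3.35 TB/s HBM bandwidth in the usage lines is an assumed illustrative value. The sketch bounds memory traffic only and does not test the vendor's 6.8x energy-efficiency claim.

```python
import math

GB = 1e9
TB = 1e12
PB = 1e15

# Figures quoted in this article for WSE-4; treated as inputs, not verified specs.
WSE4_SRAM_BYTES = 44 * GB
WSE4_MEM_BW_BYTES_PER_S = 1.2 * PB


def weight_bytes(params: float, bytes_per_param: float) -> float:
    """Size of the model weights alone (no KV cache, activations, or overhead)."""
    return params * bytes_per_param


def min_wafers_for_weights(params: float, bytes_per_param: float,
                           capacity_bytes: float = WSE4_SRAM_BYTES) -> int:
    """Lower bound on the number of wafers needed just to hold the weights."""
    return math.ceil(weight_bytes(params, bytes_per_param) / capacity_bytes)


def decode_time_lower_bound_s(params: float, bytes_per_param: float,
                              bandwidth_bytes_per_s: float) -> float:
    """Memory-bound lower limit on time per generated token at batch size 1.

    Each decoding step reads every weight once, so time >= bytes / bandwidth.
    Compute time, KV-cache traffic and batching effects are ignored.
    """
    return weight_bytes(params, bytes_per_param) / bandwidth_bytes_per_s


if __name__ == "__main__":
    print(min_wafers_for_weights(70e9, 1))  # 2  (FP8: 70 GB of weights)
    print(min_wafers_for_weights(70e9, 2))  # 4  (FP16: 140 GB of weights)
    on_chip = decode_time_lower_bound_s(8e9, 2, WSE4_MEM_BW_BYTES_PER_S)
    hbm = decode_time_lower_bound_s(8e9, 2, 3.35 * TB)  # assumed HBM bandwidth
    print(f"on-chip: {on_chip * 1e6:.1f} us/token, HBM: {hbm * 1e6:.1f} us/token")
```

Under these assumptions a 70B-parameter model needs at least two wafers at FP8 and four at FP16 for its weights alone, before KV cache and activations. This is the fixed-memory-capacity constraint that the competitive comparison lists among Cerebras' disadvantages.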

4. Principle and Workflow

The following illustrates the workflow of the WSE architecture using a single large-model inference request as an example.

- Model Loading & Partitioning: The inference framework partitions the large model's parameters and maps them into the distributed 44GB SRAM according to the physical layout of compute cores and memory on the wafer. Once loaded, the weights stay on-chip and are read at up to 1.2PB/s of aggregate bandwidth during computation, instead of being streamed repeatedly from external memory as on a GPU.

- Request Processing & Computation: The user inference request (Prompt) is fed into the system and distributed by the framework to specific cores on the wafer. Computation tasks are dynamically scheduled and flow among the wafer's cores over the high-speed on-chip network. When a core executes its computation, it fetches the required data from the distributed memory adjacent to it, giving extremely low-latency data supply.

- Result Aggregation & Return: Intermediate results produced by all compute cores are reduced and aggregated via the efficient on-chip network, finally forming the complete output sequence (Response), which is returned to the user. Throughout the process, data predominantly flows at high speed within the wafer.
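Two of the steps above, partitioning and on-mesh aggregation, can be sketched as a toy model. This is not Cerebras' compiler or runtime, and the source does not say which partitioning or reduction scheme the WSE software stack actually uses. `partition_layers` (a hypothetical name) greedily packs consecutive layers into fixed-capacity memory regions, one simple way to do the partitioning step. `grid_reduce` (also hypothetical) sums per-core partial results on a 2D grid by shifting them left along every row in parallel, then up the first column, and counts the neighbour-to-neighbour steps.

```python
from typing import List, Tuple


def partition_layers(layer_bytes: List[int], capacity_bytes: int) -> List[List[int]]:
    """Greedy contiguous partition of layers into fixed-capacity memory regions.

    Fills a region with consecutive layers until the next one would not fit,
    then starts a new region. Returns a list of regions of layer indices.
    """
    regions: List[List[int]] = []
    current: List[int] = []
    used = 0
    for i, size in enumerate(layer_bytes):
        if size > capacity_bytes:
            raise ValueError(f"layer {i} ({size} bytes) exceeds region capacity")
        if used + size > capacity_bytes:
            regions.append(current)
            current, used = [], 0
        current.append(i)
        used += size
    if current:
        regions.append(current)
    return regions


def grid_reduce(grid: List[List[float]]) -> Tuple[float, int]:
    """Chain reduction on a 2D grid of cores; returns (total, steps).

    Phase 1: all rows in parallel shift partial sums one column left per step.
    Phase 2: the first column shifts partial sums one row up per step.
    """
    if not grid or not grid[0]:
        raise ValueError("grid must be non-empty")
    rows, cols = len(grid), len(grid[0])
    vals = [row[:] for row in grid]  # copy so the caller's grid is untouched
    steps = 0
    for c in range(cols - 1, 0, -1):
        for r in range(rows):
            vals[r][c - 1] += vals[r][c]
            vals[r][c] = 0
        steps += 1
    for r in range(rows - 1, 0, -1):
        vals[r - 1][0] += vals[r][0]
        vals[r][0] = 0
        steps += 1
    return vals[0][0], steps


if __name__ == "__main__":
    # Hypothetical layer sizes (arbitrary units) packed into regions of capacity 30.
    print(partition_layers([10, 10, 10, 25, 5], 30))  # [[0, 1, 2], [3, 4]]
    # Partial results held by a 2x3 grid of cores.
    print(grid_reduce([[1, 2, 3], [4, 5, 6]]))  # (21, 3)
```

The chain reduction takes (cols - 1) + (rows - 1) steps; a tree-shaped reduction would need fewer steps on a large grid, so this is a readability choice, not a claim about how the WSE aggregates results.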

5. Competitive Landscape Analysis

5.1 Key Competitor Comparison

| Dimension | Cerebras | NVIDIA | AMD |
|---|---|---|---|
| Technology Route | Wafer-scale "giant chip" + distributed on-chip memory | Multi-chip GPU + external HBM + NVLink/advanced packaging | Multi-chip GPU + external HBM + CDNA architecture |
| Core Advantages | (1) Extreme inference energy efficiency and low latency in specific scenarios; (2) ultra-high linear cluster scaling efficiency; (3) freedom from HBM supply-chain dependency | (1) Dominant CUDA software ecosystem; (2) full coverage of training and inference scenarios; (3) strong mass-production and supply-chain capabilities | (1) General-purpose acceleration capability; (2) cost-performance advantage; (3) promotes an open ecosystem (ROCm) |
| Main Disadvantages | (1) Software ecosystem far less rich than CUDA; (2) complex manufacturing, potential yield challenges; (3) fixed memory capacity limits model size; (4) currently highly dependent on a single customer (OpenAI) | (1) Reliance on expensive HBM, high cost; (2) low scaling efficiency for ultra-large-scale inference clusters; (3) energy-efficiency challenges from specialized architectures | (1) Software ecosystem (ROCm) still immature; (2) small share of the ultra-large-scale AI market; (3) also faces the "memory wall" problem |
| Scenario Focus | Large-model inference (current focus) | Training primarily, inference secondarily | Training and inference |

5.2 Differentiation and Market Dynamics

Core Differentiation:

- Fundamental Technology Route Difference: Cerebras follows a "giant chip" integration philosophy, pursuing extreme on-chip unified memory and interconnect; NVIDIA/AMD follow a "multi-chip + advanced packaging" philosophy, expanding capabilities via external high-bandwidth components.

- Scenario Focus Difference: Cerebras WSE-4 is explicitly optimized for energy efficiency and throughput in large model inference; NVIDIA GPUs must balance the different needs of training and inference, offering a general-purpose solution.

- Deployment & Business Model: Cerebras currently tends to deploy large-scale dedicated clusters in cooperation with top-tier customers (e.g., OpenAI, cloud providers); NVIDIA GPUs penetrate almost all data centers in the form of standard accelerator cards.

Market Dynamics:

The AI computing power market remains dominated by NVIDIA, but its structure is changing as demand for inference computing power grows exponentially. Leveraging its landmark partnership with OpenAI and the capital from the IPO, Cerebras is moving from the technology-validation stage toward mainstream market penetration. Market reactions are already visible: NVIDIA has responded defensively by launching inference-specific products and lowering service prices (the Wall Street Journal reported a 22% reduction), and leading cloud providers such as AWS and Google Cloud have begun testing WSE clusters, with plans to launch commercial instances in Q4 2026. Cerebras thus introduces a new technological variable and a potential competitive option into the AI computing power market. However, it is premature to declare that a "one superpower, multiple strong players" landscape has already formed or is irreversible; Cerebras' ecosystem expansion and the scale of its subsequent commercial deployments still need to be observed.

6. Key Judgments

| Key Judgment | Significance | Action Recommendations | Confidence Level |
|---|---|---|---|
| Cerebras' IPO marks capital-market validation of its technology route, but its ability to challenge NVIDIA depends on whether, within the next 24 months, it can raise the revenue share from non-OpenAI customers above 30% and adapt its software stack to at least 3 mainstream AI frameworks. | Gives the market an early successful case of an alternative technology route, potentially drawing more capital and talent into the dedicated AI chip field, but is unlikely to shake the dominance of the CUDA ecosystem in the short term. | (1) AI enterprises should begin evaluating the cost-benefit model of dedicated inference architectures as part of a supply-chain diversification strategy. (2) Investors should distinguish technological validation from commercial-scale success, focusing on customer diversification progress. | High |
| Cerebras' on-chip memory route shows significant energy-efficiency potential for inference on models of specific sizes (parameters fitting within 44GB of memory), but its fixed memory capacity imposes a fundamental upper limit on model scale. | Shows that precise matching between hardware architecture and software workload is key to extreme efficiency, while highlighting the compromise this route makes on generality and scalability. | (1) Technical analysts should assess the applicability boundaries of the WSE architecture by model size and inference batch size. (2) Competitors should specifically optimize the energy efficiency of their HBM systems for small-to-medium-scale inference. | Medium-High |
| The $20 billion partnership with OpenAI is a key support for Cerebras' IPO valuation, but this close tie also creates high customer-concentration risk; its commercial success still has to be proven with a broader customer base. | The OpenAI partnership is a crucial technological validation and market endorsement, but Cerebras' long-term value depends on its ecosystem expansion, i.e., whether it can replicate more "OpenAI cases." | Closely track Cerebras' progress in securing orders from other major customers (e.g., other leading model companies and large cloud providers) after the IPO, as a key signal of whether its valuation is sustainable. | High |

7. Open Research Questions

- Manufacturing & Supply Chain Bottlenecks: What are the WSE architecture's chip yield, manufacturing complexity, and supply-chain relationships with foundries such as TSMC? Are there bottlenecks in its large-scale production capacity? (Analytical inference: treating an entire wafer as a single chip leaves extremely low tolerance for manufacturing defects, making yield control and cost core challenges.)

- Training Scenario Capability: Beyond inference, what is the actual performance and scalability of the WSE architecture in AI training scenarios? Does it have the potential to challenge NVIDIA's training business? (Currently no public quantitative data; need to wait for Cerebras or third parties to release training benchmark results.)

- Software Ecosystem Challenge: How large is the gap in usability and ecosystem richness between Cerebras' software stack (especially its compiler and operator library) and CUDA? How can it attract more developers beyond serving top-tier clients? (Inference from public information: its software ecosystem is still at an early stage, which is a major obstacle to expanding its customer base.)

- Infrastructure & TCO: What new requirements does the deployment of a 750-megawatt WSE cluster impose on data center infrastructure like power supply and cooling? Does its claimed Total Cost of Ownership (TCO) advantage still hold in broader deployment scenarios? (Requires more third-party deployment case validation; thermal solutions for ultra-high power density chips are key.)
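The power question above can at least be framed arithmetically. A minimal sketch, assuming the 750 MW figure is total facility power, and treating PUE (total facility power divided by IT power) and per-system power draw as inputs you supply. The source gives neither a PUE nor a CS-4 power rating, so the values in the usage lines are purely hypothetical, as are the function names.

```python
HOURS_PER_YEAR = 8760  # non-leap year


def systems_supported(facility_mw: float, system_kw: float, pue: float) -> int:
    """Number of whole systems a facility power budget can feed.

    PUE = facility power / IT power, so the IT budget is facility power / PUE.
    """
    if system_kw <= 0 or pue < 1.0:
        raise ValueError("system_kw must be positive and pue must be >= 1.0")
    it_budget_kw = facility_mw * 1000.0 / pue
    return int(it_budget_kw // system_kw)


def annual_energy_gwh(facility_mw: float, utilization: float = 1.0) -> float:
    """Energy drawn over a year at the given average utilization of the budget."""
    return facility_mw * HOURS_PER_YEAR * utilization / 1000.0


if __name__ == "__main__":
    # Hypothetical inputs: PUE 1.25 and 20 kW per system (not from the source).
    print(systems_supported(750, 20, 1.25))  # 30000
    print(annual_energy_gwh(750))            # 6570.0
```

At full draw, 750 MW corresponds to 6,570 GWh per year, which gives a sense of the power-delivery and cooling scale this question raises.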

Why it Matters

Positioning: Disruptive; wafer-scale integration challenges the traditional multi-chip architecture.

Key Factor: The core competitive barrier is the on-chip ultra-high bandwidth and ultra-low latency enabled by wafer-scale integration, granting it theoretical advantages in energy efficiency and linear scaling for inference tasks with specific model sizes (parameters fitting within 44GB memory). However, the strength of this barrier is limited by its fixed memory capacity, manufacturing yield challenges, and immature software ecosystem, confining its advantages to highly focused scenarios with poor generality.

Stage: Peak of Inflated Expectations
